Ways of Providing Data to a Model

Many organizations are now exploring the power of generative AI to improve their efficiency and gain new capabilities. In most cases, to fully unlock these powers, AI must have access to the relevant enterprise data. Large Language Models (LLMs) are trained on publicly available data (e.g. Wikipedia articles, books, web index, etc.), which is enough for many general-purpose applications, but there are plenty of others that are highly dependent on private data, especially in enterprise environments.

There are three main ways to provide new data to a model:

- Pre-training a model from scratch. This rarely makes sense for most companies because it is very expensive and requires a lot of resources and technical expertise.

- Fine-tuning an existing general-purpose LLM. This can reduce the resource requirements compared to pre-training, but still requires significant resources and expertise. Fine-tuning produces specialized models that perform better in the domain they are fine-tuned for but may perform worse in others.

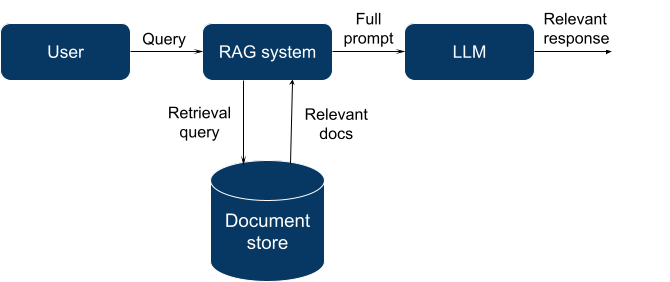

- Retrieval augmented generation (RAG). The idea is to fetch data relevant to a query and include it in the LLM context so that it can “ground” its own outputs in that information. Such relevant data in this context is referred to as “grounding data”. RAG complements generic LLM models, but the amount of information that can be provided is limited by the LLM context window size (the amount of text the LLM can process at once when generating output).
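To make the RAG idea from the last item concrete, here is a minimal sketch of how retrieved passages might be packed into a prompt. The function name `build_grounded_prompt`, the prompt wording, and the character-based budget are all assumptions made for illustration; real systems usually measure the context window in tokens, not characters.

```python
def build_grounded_prompt(question, ranked_passages, max_context_chars=200):
    """Pack passages into the prompt until the (hypothetical) budget is used up."""
    selected = []
    used = 0
    for passage in ranked_passages:  # assumed to be sorted, most relevant first
        if used + len(passage) > max_context_chars:
            break  # the context window is limited, so the rest is dropped
        selected.append(passage)
        used += len(passage)
    context = "\n".join(f"- {p}" for p in selected)
    return (
        "Answer using only the grounding data below.\n"
        f"Grounding data:\n{context}\n"
        f"Question: {question}"
    )


passages = [
    "Refunds are accepted within 30 days of purchase.",
    "Refund requests need the original order number.",
    "The office cafeteria opens at 8 am.",
]
prompt = build_grounded_prompt(
    "What is the refund window?", passages, max_context_chars=100
)
print(prompt)
```

With a budget of 100 characters, only the first two passages fit, which is why the quality of the ranking matters so much.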

Currently, RAG is the most accessible way to provide new information to an LLM, so let’s focus on this method and dive a little deeper.

Retrieval Augmented Generation

In general, RAG means using a search or retrieval engine to fetch a relevant set of documents for a specified query.

For this purpose, we can use many existing systems: a full-text search engine (like Elasticsearch with traditional information retrieval techniques), a general-purpose database with a vector search extension (Postgres with pgvector, Elasticsearch with a vector search plugin), or a specialized database that was created specifically for vector search.

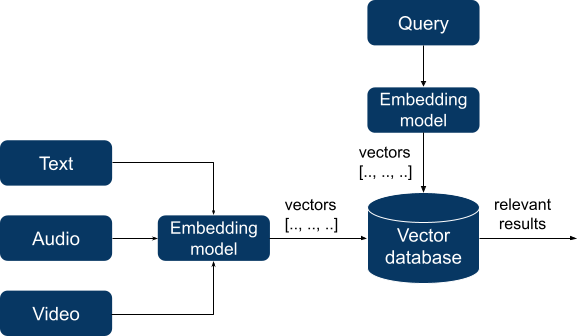

In the two latter cases, RAG is similar to semantic search. For a long time, semantic search was a highly specialized and complex domain with exotic query languages and niche databases. Indexing data required extensive preparation and building knowledge graphs, but recent progress in deep learning has dramatically changed the landscape. Modern semantic search applications now depend on embedding models that successfully learn semantic patterns in the data they are presented with. These models take unstructured data (text, audio, or even video) as input and transform it into vectors of numbers of a fixed length, thus turning unstructured data into a numeric form that can be used for calculations. It then becomes possible to calculate the distance between vectors using a chosen distance metric, and the resulting distance will reflect the semantic similarity between vectors and, in turn, between pieces of the original data.
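As a small illustration of the distance calculation described above, the sketch below computes cosine similarity between toy vectors. The three-dimensional "embeddings" are made-up values chosen for illustration, not the output of any real embedding model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings: related concepts point in similar directions.
cat = [0.9, 0.1, 0.0]
kitten = [0.8, 0.2, 0.1]
invoice = [0.0, 0.1, 0.9]

print(cosine_similarity(cat, kitten))   # close to 1: semantically similar
print(cosine_similarity(cat, invoice))  # close to 0: unrelated
```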

These vectors are indexed by a vector database and, when querying, our query is also transformed into a vector. The database searches for the N vectors closest to the query vector (according to a chosen distance metric like cosine similarity) and returns them.
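The search for the N closest vectors can be sketched as an exhaustive scan. This is only a brute-force illustration under assumed toy data (the document ids and vectors are hypothetical); real vector databases use an index to avoid comparing the query with every stored vector.

```python
import heapq
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest(query_vector, stored, n=2):
    """Return the n (doc_id, score) pairs most similar to query_vector."""
    scored = (
        (doc_id, cosine_similarity(query_vector, vector))
        for doc_id, vector in stored.items()
    )
    return heapq.nlargest(n, scored, key=lambda pair: pair[1])


# Hypothetical stored vectors keyed by document id.
stored = {
    "doc-cats": [0.9, 0.1, 0.0],
    "doc-dogs": [0.7, 0.3, 0.1],
    "doc-tax": [0.0, 0.2, 0.9],
}
query = [0.8, 0.2, 0.0]
top = nearest(query, stored, n=2)
print(top)
```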

A vector database is responsible for these three things:

- Indexing. The database builds an index of vectors using some built-in algorithm (e.g. locality-sensitive hashing (LSH) or hierarchical navigable small world (HNSW)) to precompute data that speeds up querying.

- Querying. The database uses a query vector and an index to find the most relevant vectors in the database.

- Post-processing. After the result set is formed, we sometimes want to run an additional step like metadata filtering or re-ranking within the result set to improve the outcome.
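The three responsibilities above can be sketched with a toy in-memory store. `ToyVectorStore` and its method names are hypothetical and do not correspond to any real product's API; the "index" here is only a list of pre-normalized vectors, standing in for a real structure such as HNSW.

```python
import math


def _unit(vector):
    """Scale a vector to length 1 so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


class ToyVectorStore:
    def __init__(self):
        self._rows = []  # (doc_id, unit_vector, metadata)

    def index(self, doc_id, vector, metadata):
        # Indexing: precompute whatever makes querying cheaper later.
        self._rows.append((doc_id, _unit(vector), metadata))

    def query(self, vector, n):
        # Querying: score every row against the query and keep the best n.
        unit = _unit(vector)
        scored = [
            (sum(a * b for a, b in zip(unit, row_vec)), doc_id, metadata)
            for doc_id, row_vec, metadata in self._rows
        ]
        scored.sort(key=lambda item: item[0], reverse=True)
        return scored[:n]

    @staticmethod
    def post_process(results, **required):
        # Post-processing: keep only hits whose metadata matches the filter.
        return [
            hit for hit in results
            if all(hit[2].get(key) == value for key, value in required.items())
        ]


store = ToyVectorStore()
store.index("a", [1.0, 0.0], {"lang": "en"})
store.index("b", [0.9, 0.1], {"lang": "de"})
store.index("c", [0.0, 1.0], {"lang": "en"})

hits = store.query([1.0, 0.1], n=2)
english_hits = ToyVectorStore.post_process(hits, lang="en")
hit_ids = [doc_id for _, doc_id, _ in hits]
english_ids = [doc_id for _, doc_id, _ in english_hits]
print(hit_ids, english_ids)
```

Note that filtering after the query can shrink the result set below the requested size, which is one reason some databases apply metadata filters during the search instead.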

The purpose of a vector database is to provide a fast, reliable, and efficient way to store and query data. Retrieval speed and search quality can be influenced by the choice of index type. In addition to the already mentioned LSH and HNSW there are others, each with its own set of strengths and weaknesses. Most databases make the choice for us, but in some, you can choose an index type manually to control the tradeoff between speed and accuracy.
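To give a feel for the speed and accuracy tradeoff, here is a toy version of random-hyperplane LSH. It is a simplified sketch, not how any particular database implements LSH: `ToyLSHIndex`, its parameters, and the random test vectors are all assumptions made for illustration. More hash bits mean smaller buckets (fewer vectors to compare, so faster queries) but a higher chance that a true neighbor lands in a different bucket.

```python
import random
from collections import defaultdict


class ToyLSHIndex:
    def __init__(self, dim, num_bits, seed=0):
        rng = random.Random(seed)
        # Each random hyperplane contributes one bit of the bucket signature.
        self.planes = [
            [rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_bits)
        ]
        self.buckets = defaultdict(list)

    def _signature(self, vector):
        # One bit per plane: which side of the hyperplane the vector falls on.
        return tuple(
            sum(p * x for p, x in zip(plane, vector)) >= 0.0
            for plane in self.planes
        )

    def add(self, doc_id, vector):
        self.buckets[self._signature(vector)].append(doc_id)

    def candidates(self, query_vector):
        # Only vectors in the query's bucket are considered for comparison.
        return list(self.buckets.get(self._signature(query_vector), []))


data_rng = random.Random(1)
vectors = {
    f"doc-{i}": [data_rng.uniform(-1.0, 1.0) for _ in range(8)]
    for i in range(200)
}

coarse = ToyLSHIndex(dim=8, num_bits=2)  # few bits: big buckets, slower, safer
fine = ToyLSHIndex(dim=8, num_bits=8)    # many bits: small buckets, faster, riskier
for doc_id, vector in vectors.items():
    coarse.add(doc_id, vector)
    fine.add(doc_id, vector)

query = vectors["doc-0"]
coarse_candidates = coarse.candidates(query)
fine_candidates = fine.candidates(query)
print(len(coarse_candidates), len(fine_candidates))
```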

At DataRobot, we believe the technique is here to stay. Fine-tuning can require very sophisticated data preparation to turn raw text into training-ready data, and it’s more of an art than a science to coax LLMs into “learning” new facts through fine-tuning while maintaining their general knowledge and instruction-following behavior.

LLMs are typically very good at applying knowledge supplied in-context, especially when only the most relevant material is provided, so a good retrieval system is crucial.

Note that the choice of the embedding model used for RAG is essential. It is not a part of the database, and choosing the correct embedding model for your application is critical for achieving good performance. Additionally, while new and improved models are constantly being released, changing to a new model requires reindexing your entire database.
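The reindexing requirement can be sketched as follows. The class `EmbeddingIndex` and the two toy "embedding models" are hypothetical stand-ins (they only count characters and words); the point is that vectors produced by different models are not comparable, so every stored vector has to be recomputed when the model changes.

```python
class EmbeddingIndex:
    def __init__(self, model_name, embed):
        self.model_name = model_name  # recorded so stale vectors can be detected
        self._embed = embed
        self.vectors = {}

    def add(self, doc_id, text):
        self.vectors[doc_id] = self._embed(text)

    def reindex(self, new_model_name, new_embed, documents):
        """Re-embed every document; old vectors cannot be reused."""
        self.model_name = new_model_name
        self._embed = new_embed
        self.vectors = {
            doc_id: new_embed(text) for doc_id, text in documents.items()
        }


def embed_v1(text):
    # Toy 2-dimensional "model": character count and word count.
    return [float(len(text)), float(len(text.split()))]


def embed_v2(text):
    # Toy 3-dimensional "model": a different vector space entirely.
    return [float(len(text)), float(len(text.split())), float(text.count("a"))]


documents = {"faq": "how to reset a password", "policy": "data retention rules"}

index = EmbeddingIndex("toy-v1", embed_v1)
for doc_id, text in documents.items():
    index.add(doc_id, text)

index.reindex("toy-v2", embed_v2, documents)
print(index.model_name, index.vectors)
```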

Evaluating Your Options

Choosing a database in an enterprise environment is not an easy task. A database is often the heart of your software infrastructure and manages a very important business asset: data.

Generally, when we choose a database we want:

- Reliable storage

- Efficient querying

- Ability to insert, update, and delete data granularly (CRUD)

- Ability to set up multiple users with various levels of access (RBAC)

- Data consistency (predictable behavior when modifying data)

- Ability to recover from failures

- Scalability to the size of our data

This list is not exhaustive and might be a bit obvious, but not all new vector databases have these features. Often, it is the availability of enterprise features that determines the final choice between a well-known mature database that provides vector search via extensions and a newer vector-only database.

Vector-only databases have native support for vector search and can execute queries very fast, but often lack enterprise features and are relatively immature. Keep in mind that it takes years to build complex features and battle-test them, so it’s no surprise that early adopters face outages and data losses. On the other hand, in existing databases that provide vector search through extensions, a vector is not a first-class citizen and query performance can be much worse.

We will categorize all current databases that provide vector search into the following groups and then discuss them in more detail:

- Vector search libraries

- Vector-only databases

- NoSQL databases with vector search

- SQL databases with vector search

- Vector search solutions from cloud vendors

Vector search libraries

Vector search libraries like FAISS and ANNOY are not databases; rather, they provide in-memory vector indices and only limited data persistence options. While these features are not ideal for users requiring a full enterprise database, the libraries offer very fast nearest neighbor search and are open source. They offer good support for high-dimensional data and are highly configurable (you can choose the index type and other parameters).

Overall, they are good for prototyping and integration in simple applications, but they are inappropriate for long-term, multi-user data storage.

Vector-only databases

This group includes diverse products like Milvus, Chroma, Pinecone, Weaviate, and others. There are notable differences among them, but all of them are specifically designed to store and retrieve vectors. They are optimized for efficient similarity search with indexing, and they support high-dimensional data and vector operations natively.

Most of them are newer and might not have the enterprise features we mentioned above, e.g. some of them lack CRUD, proven failure recovery, RBAC, and so on. For the most part, they can store the raw data, the embedding vector, and a small amount of metadata, but they can’t store other index types or relational data, which means you will have to use another, secondary database and maintain consistency between them.

Their performance is often unmatched, and they are a good option when you have multimodal data (images, audio, or video).

NoSQL databases with vector search

Many so-called NoSQL databases recently added vector search to their products, including MongoDB, Redis, Neo4j, and Elasticsearch. They offer good enterprise features, are mature, and have a strong community, but they provide vector search functionality via extensions, which might lead to less than ideal performance and a lack of first-class support for vector search. Elasticsearch stands out here as it is designed for full-text search and already has many traditional information retrieval features that can be used in conjunction with vector search.

NoSQL databases with vector search are a good choice when you are already invested in them and need vector search as an additional, but not very demanding, feature.

SQL databases with vector search

This group is somewhat similar to the previous group, but here we have established players like PostgreSQL and ClickHouse. They offer a wide array of enterprise features, are well-documented, and have strong communities. As for their disadvantages, they are designed for structured data, and scaling them requires specific expertise.

Their use case is also similar: they are a good choice when you already have them and the expertise to run them in place.

Vector search solutions from cloud vendors

Hyperscalers also offer vector search services. They usually have basic features for vector search (you can choose an embedding model, index type, and other parameters), good interoperability with the rest of the cloud platform, and more flexibility when it comes to cost, especially if you use other services on their platform. However, they differ in maturity and feature sets: Google Cloud vector search uses a fast proprietary index search algorithm called ScaNN and supports metadata filtering, but is not very user-friendly; Azure Vector search offers structured search capabilities, but is in the preview phase; and so on.

Vector search entities can be managed using enterprise features of their platform like IAM (Identity and Access Management), but they are not that simple to use and are geared toward general cloud usage.

Making the Right Choice

The main use case of vector databases in this context is to provide relevant information to a model. For your next LLM project, you can choose a database from an existing array of databases that offer vector search capabilities via extensions or from new vector-only databases that offer native vector support and fast querying.

The choice depends on whether you need enterprise features or high-scale performance, as well as on your deployment architecture and desired maturity (research, prototyping, or production). You should also consider which databases are already present in your infrastructure and whether you have multimodal data. In any case, whatever choice you make, it is good to hedge it: treat a new database as an auxiliary storage cache, rather than a central point of operations, and abstract your database operations in code to make it easy to adjust to the next iteration of the vector RAG landscape.
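One way to abstract database operations in code is to have the application depend on a small interface and keep each backend behind it. The sketch below is only an illustration: the `VectorStore` protocol, the `InMemoryStore` backend, and the `retrieve_context` helper are hypothetical names, and a real adapter would wrap the client library of whichever database you choose.

```python
from typing import Dict, List, Protocol, Sequence


class VectorStore(Protocol):
    """The only surface the application code is allowed to depend on."""

    def upsert(self, doc_id: str, vector: Sequence[float]) -> None: ...

    def search(self, vector: Sequence[float], n: int) -> List[str]: ...


class InMemoryStore:
    """Stand-in backend; a real adapter would call a database client here."""

    def __init__(self) -> None:
        self._vectors: Dict[str, List[float]] = {}

    def upsert(self, doc_id: str, vector: Sequence[float]) -> None:
        self._vectors[doc_id] = list(vector)

    def search(self, vector: Sequence[float], n: int) -> List[str]:
        def score(doc_id: str) -> float:
            # Dot product as a simple similarity score for this toy backend.
            return sum(a * b for a, b in zip(vector, self._vectors[doc_id]))

        return sorted(self._vectors, key=score, reverse=True)[:n]


def retrieve_context(store: VectorStore, query_vector: Sequence[float], n: int = 1) -> List[str]:
    # Application logic sees only the interface, so backends can be swapped.
    return store.search(query_vector, n)


store = InMemoryStore()
store.upsert("faq", [1.0, 0.0])
store.upsert("policy", [0.0, 1.0])
result = retrieve_context(store, [0.9, 0.1], n=1)
print(result)
```

Swapping databases then means writing one new adapter class with the same two methods, while `retrieve_context` and the rest of the application stay unchanged.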

How DataRobot Can Help

There are already many vector database options to choose from. They each have their pros and cons, and no one vector database will be right for all of your organization’s generative AI use cases. That is why it’s important to retain optionality and leverage a solution that allows you to customize your generative AI solutions to specific use cases, and adapt as your needs change or the market evolves.

The DataRobot AI Platform lets you bring your own vector database, whichever is right for the solution you’re building. If you require changes in the future, you can swap out your vector database without breaking your production environment and workflows.

About the author

Nick Volynets is a senior data engineer working with the office of the CTO where he enjoys being at the heart of DataRobot innovation. He is interested in large scale machine learning and passionate about AI and its impact.