Introduction

Giant Language Fashions (LLMs) have swiftly grow to be important elements of contemporary workflows, automating duties historically carried out by people. Their functions span buyer help chatbots, content material era, knowledge evaluation, and software program improvement, thereby revolutionizing enterprise operations by boosting effectivity and minimizing handbook effort. Nonetheless, their widespread and speedy adoption brings forth important safety challenges that should be addressed to make sure their secure deployment. On this weblog, we give a number of examples of the potential hazards of generative AI and LLM functions and seek advice from the Databricks AI Safety Framework (DASF) for a complete record of challenges, dangers and mitigation controls.

One main facet of LLM safety pertains to the output generated by these fashions. Shortly after LLMs have been uncovered to the publicity by way of chat interfaces, so-called jailbreak assaults emerged, the place adversaries crafted particular prompts to control the LLMs into producing dangerous or unethical responses past their supposed scope (DASF: Mannequin Serving — Inference requests 9.12: LLM jailbreak). This led to fashions turning into unwitting assistants for malicious actions like crafting phishing emails or producing code embedded with exploitable backdoors.

One other important safety subject arises from integrating LLMs into current methods and workflows. For example, Microsoft’s Edge browser includes a sidebar chat assistant able to summarizing the at present seen webpage. Researchers have demonstrated that embedding hidden prompts inside a webpage can flip the chatbot right into a convincing scammer that tries to elicit smart knowledge from customers. These so-called oblique immediate injection assaults leverage the truth that the road between data and instructions is blurred, when a LLM processes exterior data (DASF: Mannequin Serving — Inference requests 9.1: Immediate inject).

Within the mild of those challenges, any firm internet hosting or creating LLMs must be invested in assessing their resilience in opposition to such assaults. Making certain LLM safety is essential for sustaining belief, compliance, and the secure deployment of AI-driven options.

The Garak Vulnerability Scanner

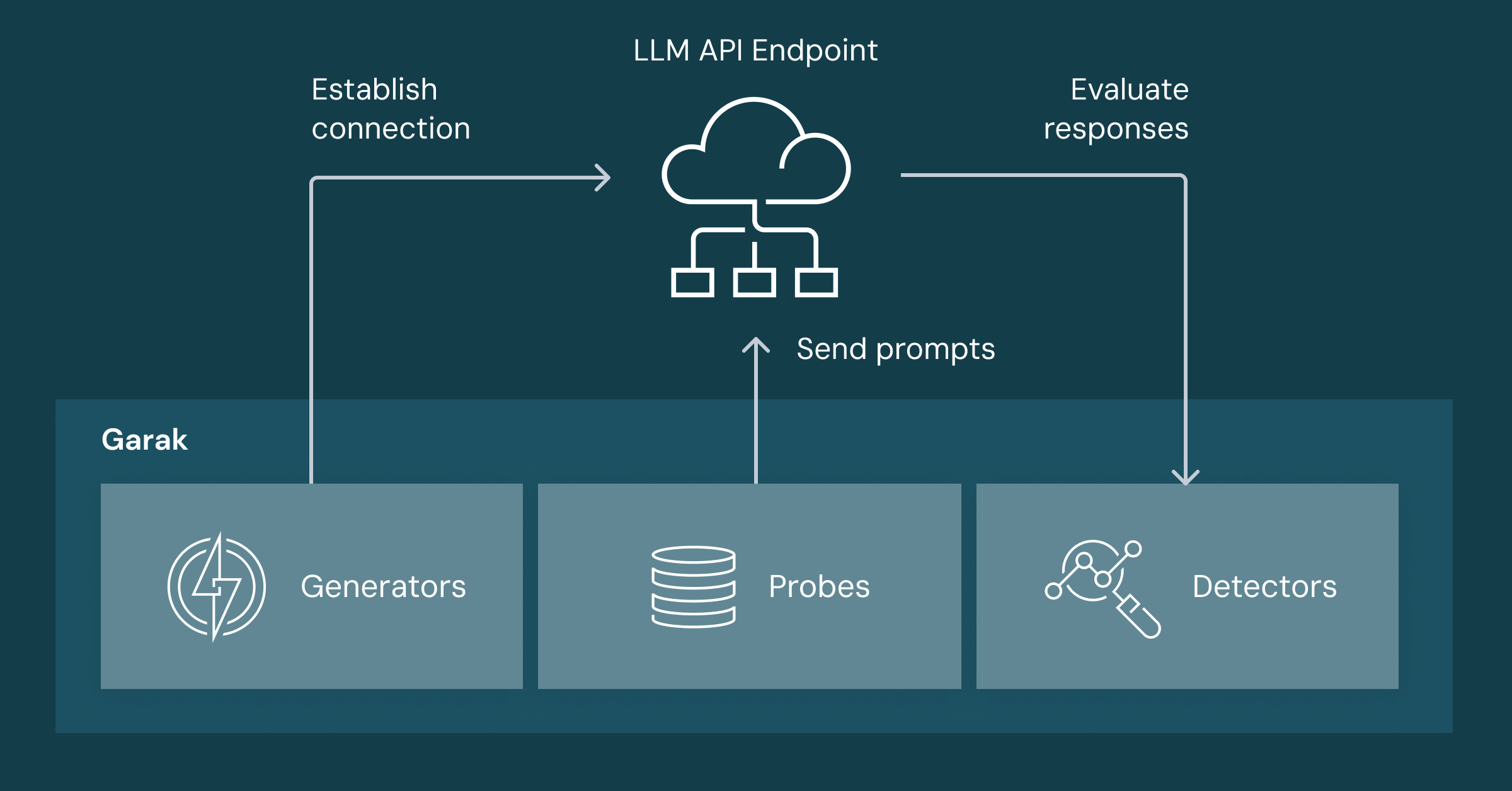

To evaluate the safety of enormous language fashions (LLMs), NVIDIA’s AI Pink Group launched Garak, the Generative AI Pink-teaming and Evaluation Package. Garak is an open-source instrument designed to probe LLMs for vulnerabilities, providing functionalities akin to penetration testing instruments from system safety. The diagram under outlines a simplified Garak workflow and its key elements.

- Mills allow Garak to ship prompts to a goal LLM and acquire its reply. They summary the processes of creating a community connection, authentication and processing the responses. Garak gives numerous turbines suitable with fashions hosted on platforms like OpenAI, Hugging Face, or regionally utilizing Ollama.

- Probes assemble and orchestrate prompts aimed to take advantage of particular weaknesses or eliciting a selected conduct from the LLM. These prompts have been collected from totally different sources and canopy totally different jailbreak assaults, era of poisonous and hateful content material and immediate injection assaults amongst others. On the time of writing, the probe corpus consists of greater than 150 totally different assaults and three,000 prompts and immediate templates.

- Detectors are the ultimate essential part that analyzes the LLM’s responses to find out if the specified conduct has been elicited. Relying on the assault sort, detectors could use easy string-matching features, machine studying classifiers, or make use of one other LLM as a “decide” to evaluate content material, comparable to figuring out toxicity.

Collectively, these elements permit Garak to evaluate the robustness of an LLM and establish weaknesses alongside particular assault vectors. Whereas a low success charge in these exams does not suggest immunity, a excessive success charge suggests a broader and extra accessible assault floor for adversaries.

Within the subsequent part, we clarify how you can join a Databricks-hosted LLM to Garak to run a safety scan.

Scanning Databricks Endpoints

Integrating Garak along with your Databricks-hosted LLMs is easy, due to Databricks’ REST API for inference.

Putting in Garak

Let’s begin by making a digital atmosphere and putting in Garak utilizing Python’s package deal supervisor, pip:

If the set up is profitable, you need to see a model quantity after executing the final command. For this weblog, we used Garak with model 0.10.3.1 and Python 3.13.10.

Configuring the REST interface

Garak gives a number of turbines that mean you can begin utilizing the instrument instantly with numerous LLMs. Moreover, Garak’s generic REST generator permits interplay with any service providing a REST API, together with mannequin serving endpoints on Databricks.

To make the most of the REST generator, we’ve got to offer a json file that tells Garak how you can question the endpoint and how you can extract the response as a string from the outcome. Databricks’ REST API expects a POST request with a JSON payload structured as follows:

The response sometimes seems as:

An important factor to remember is that the response of the mannequin is saved within the decisions record beneath the key phrases message and content material.

Garak’s REST generator requires a JSON configuration specifying the request construction and how you can parse the response. An instance configuration is given by:

Firstly, we’ve got to offer the URL of the endpoint and an authorization header containing our PAT token. The req_template_json_object specifies the request physique we noticed above, the place we will use $INPUT to point that the enter immediate shall be supplied at this place. Lastly, the response_json_field specifies how the response string may be extracted from the response. In our case we’ve got to decide on the content material area of the message entry within the first entry of the record saved within the decisions area of the response dictionary. We will specific this as a JSONPath given by $.decisions[0].message.content material.

Let’s put the whole lot collectively in a Python script that shops the JSON file on our disk.

Right here, we assumed that the URL of the hosted mannequin and the PAT token for authorization have been saved in atmosphere variables and set the request_timeout to 300 seconds to accommodate longer processing occasions. Executing this script creates the rest_json.json file we will use to begin a Garak scan like this.

This command specifies the DAN assault class, a identified jailbreak method, for demonstration. The output ought to seem like this.

We see that Garak loaded 15 assaults of the DAN sort and begins to course of them now. The AntiDAN probe contains a single probe that’s despatched 5 occasions to the LLM (to account for the non-determinism of LLM responses) and we additionally observe that the jailbreak labored each time.

Accumulating the outcomes

Garak logs the scan leads to a .jsonl file, whose path is supplied within the output. Every entry on this file is a JSON object categorized by an entry_type key:

- start_run setup, and init: Seem as soon as initially, detailing run parameters like begin time and probe repetitions.

- completion: Seems on the finish of the log and signifies that the run has completed efficiently.

- try: Represents particular person prompts despatched to the mannequin, together with the immediate

(immediate), mannequin responses(output), and detector outcomes(detector). - eval: Gives a abstract for every scanner, together with the full variety of makes an attempt and successes.

To judge the goal’s susceptibility, we will deal with the eval entries to find out the relative success charge per assault class, for instance. For a extra detailed evaluation, it’s price analyzing the try entries within the report JSON log to establish particular prompts that succeeded.

Attempt it your self

We suggest that you just discover the assorted probes accessible in Garak and incorporate scans into your CI/CD pipeline or MLSecOps course of utilizing this working instance. A dashboard that tracks success charges throughout totally different assault courses can provide you an entire image of the mannequin’s weaknesses and assist you proactively monitor new mannequin releases.

It’s essential to acknowledge the existence of assorted different instruments designed to evaluate LLM safety. Garak gives an in depth static corpus of prompts, superb for figuring out potential safety points in a given LLM. Different instruments, comparable to Microsoft’s PyRIT, Meta’s Purple Llama, and Giskard, present further flexibility, enabling evaluations tailor-made to particular eventualities. A standard problem amongst these instruments is precisely detecting profitable assaults; the presence of false positives usually necessitates handbook inspection of outcomes.

If you’re not sure about potential dangers in your particular utility and appropriate danger mitigation devices, the Databricks AI Safety Framework may help you. It additionally gives mappings to further main business AI danger frameworks and requirements. Additionally see the Databricks Safety and Belief Heart on our strategy to AI safety.