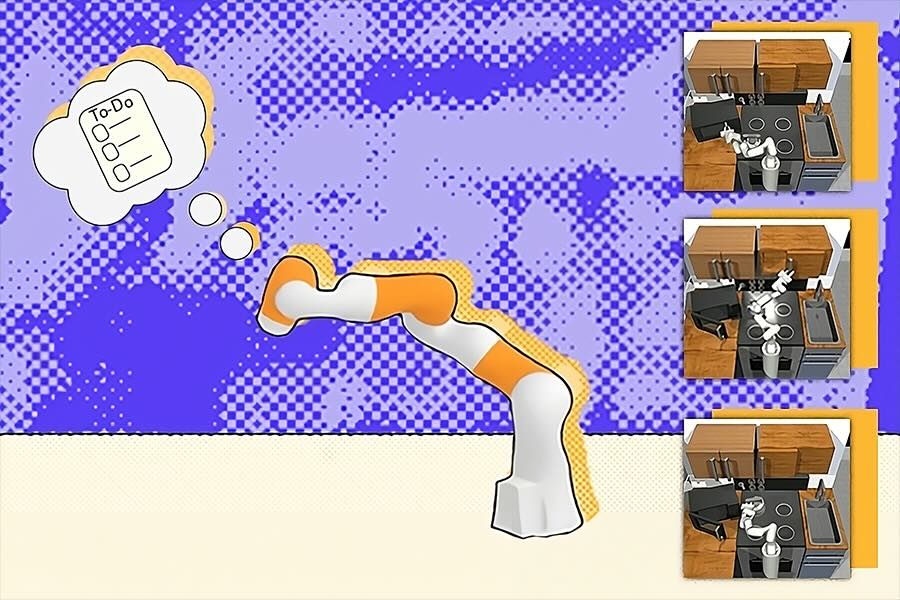

The HiP framework develops detailed plans for robots using the expertise of three different foundation models, helping them execute multistep tasks in households, factories, and construction. | Credit: Alex Shipps/MIT CSAIL

Your daily to-do list is likely pretty straightforward: wash the dishes, buy groceries, and other minutiae. It’s unlikely you wrote out “pick up the first dirty dish,” or “wash that plate with a sponge,” because each of these miniature steps within the chore feels intuitive. While we can routinely complete each step without much thought, a robot requires a complex plan that involves more detailed outlines.

MIT’s Improbable AI Lab, a group within the Computer Science and Artificial Intelligence Laboratory (CSAIL), has offered these machines a helping hand with a new multimodal framework: Compositional Foundation Models for Hierarchical Planning (HiP), which develops detailed, feasible plans with the expertise of three different foundation models. Like OpenAI’s GPT-4, the foundation model that ChatGPT and Bing Chat were built upon, these foundation models are trained on massive quantities of data for applications like generating images, translating text, and robotics.

Unlike RT2 and other multimodal models that are trained on paired vision, language, and action data, HiP uses three different foundation models, each trained on a different data modality. Each foundation model captures a different part of the decision-making process, and the three work together when it’s time to make decisions. HiP removes the need for access to paired vision, language, and action data, which is difficult to obtain. HiP also makes the reasoning process more transparent.

What’s considered a daily chore for a human can be a robot’s “long-horizon goal” (an overarching objective that involves completing many smaller steps first), requiring sufficient data to plan, understand, and execute objectives. While computer vision researchers have attempted to build monolithic foundation models for this problem, pairing language, visual, and action data is expensive. Instead, HiP represents a different, multimodal recipe: a trio that cheaply incorporates linguistic, physical, and environmental intelligence into a robot.

“Foundation models do not have to be monolithic,” said NVIDIA AI researcher Jim Fan, who was not involved in the paper. “This work decomposes the complex task of embodied agent planning into three constituent models: a language reasoner, a visual world model, and an action planner. It makes a difficult decision-making problem more tractable and transparent.”

The team believes that their AI system could help these machines accomplish household chores, such as putting away a book or placing a bowl in the dishwasher. Additionally, HiP could assist with multistep construction and manufacturing tasks, like stacking and placing different materials in specific sequences.

Evaluating HiP

The CSAIL team tested HiP on three manipulation tasks, where it outperformed comparable frameworks. The system reasoned by developing intelligent plans that adapt to new information.

First, the researchers requested that it stack different-colored blocks on each other and then place others nearby. The catch: Some of the correct colors weren’t present, so the robot had to place white blocks in a color bowl to paint them. HiP often adjusted to these changes accurately, especially compared to state-of-the-art task planning systems like Transformer BC and Action Diffuser, by adjusting its plans to stack and place each block as needed.

Another test: arranging objects such as candy and a hammer in a brown box while ignoring other items. Some of the objects it needed to move were dirty, so HiP adjusted its plans to place them in a cleaning box, and then into the brown container. In a third demonstration, the bot was able to ignore unnecessary objects to complete kitchen sub-goals such as opening a microwave, clearing a kettle out of the way, and turning on a light. Some of the prompted steps had already been completed, so the robot adapted by skipping those directions.
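
To make the kind of adaptation described above concrete, here is a minimal Python sketch. It is a hand-written toy, not HiP's method: HiP's plans come from learned models, while this sketch uses simple rules. The function `adapt_plan`, the action names, and the object names are all hypothetical and chosen only for illustration.

```python
# Toy illustration only: HiP adapts its plans with learned models,
# not with hand-written rules like these. All names are hypothetical.

def adapt_plan(subgoals, already_done, dirty_objects):
    """Return an adapted list of (action, object) sub-goals.

    - Sub-goals that are already completed are skipped.
    - A cleaning step is inserted before boxing any dirty object.
    """
    adapted = []
    for action, obj in subgoals:
        if (action, obj) in already_done:
            continue  # skip steps that were completed beforehand
        if action == "place_in_box" and obj in dirty_objects:
            adapted.append(("place_in_cleaning_box", obj))
        adapted.append((action, obj))
    return adapted


subgoals = [
    ("place_in_box", "candy"),
    ("place_in_box", "hammer"),
    ("turn_on", "light"),
]
adapted = adapt_plan(
    subgoals,
    already_done={("turn_on", "light")},
    dirty_objects={"hammer"},
)
print(adapted)
```

In this toy run, the light is already on, so that step is dropped, and the dirty hammer gets a cleaning step before it goes into the box.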

A three-pronged hierarchy

HiP’s three-pronged planning process operates as a hierarchy, with the ability to pre-train each of its components on different sets of data, including information outside of robotics. At the bottom of that order is a large language model (LLM), which starts to ideate by capturing all the symbolic information needed and developing an abstract task plan. Applying the common sense knowledge it finds on the internet, the model breaks its objective into sub-goals. For example, “making a cup of tea” turns into “filling a pot with water,” “boiling the pot,” and the subsequent actions required.

“All we want to do is take existing pre-trained models and have them successfully interface with each other,” says Anurag Ajay, a PhD student in the MIT Department of Electrical Engineering and Computer Science (EECS) and a CSAIL affiliate. “Instead of pushing for one model to do everything, we combine multiple ones that leverage different modalities of internet data. When used in tandem, they help with robotic decision-making and can potentially aid with tasks in homes, factories, and construction sites.”

These AI models also need some form of “eyes” to understand the environment they’re operating in and correctly execute each sub-goal. To augment the initial planning completed by the LLM, the team used a large video diffusion model, which collects geometric and physical information about the world from footage on the internet. In turn, the video model generates an observation trajectory plan, refining the LLM’s outline to incorporate new physical knowledge.

This process, known as iterative refinement, allows HiP to reason about its ideas, taking in feedback at each stage to generate a more practical outline. The flow of feedback is similar to writing an article, where an author may send their draft to an editor, and with those revisions incorporated, the publisher reviews for any last changes and finalizes.
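
As a rough illustration of this feedback loop, the Python sketch below has an upper level propose several candidate sub-goals and a lower level score each one, keeping the highest-rated candidate. The function `refine`, the candidate strings, and the feasibility scores are all hypothetical. The article does not describe how HiP computes or exchanges feedback, so this is an assumption-laden toy and not the authors' method.

```python
from typing import Callable, Sequence

# Toy sketch of feedback between two planning levels.
# Assumption: the lower level can rate how feasible a proposal is.


def refine(candidates: Sequence[str], feasibility: Callable[[str], float]) -> str:
    """Return the candidate that the lower level rates as most feasible."""
    return max(candidates, key=feasibility)


# Hypothetical scores standing in for a lower-level model's feedback.
scores = {"fill a pot with water": 0.9, "boil the pot": 0.2}


def toy_feasibility(subgoal: str) -> float:
    # Unknown proposals get the lowest score.
    return scores.get(subgoal, 0.0)


best = refine(["boil the pot", "fill a pot with water"], toy_feasibility)
print(best)
```

Here the empty pot makes “boil the pot” a poor first step, so the toy scorer steers the plan toward filling the pot first.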

In this case, the top of the hierarchy is an egocentric action model, which uses a sequence of first-person images to infer which actions should take place based on the robot’s surroundings. During this stage, the observation plan from the video model is mapped over the space visible to the robot, helping the machine decide how to execute each task within the long-horizon goal. If a robot uses HiP to make tea, this means it will have mapped out exactly where the pot, sink, and other key visual elements are, and can begin completing each sub-goal.
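
The three levels described in this section can be sketched as a simple composition of stand-in functions. This is a minimal Python sketch under stated assumptions, not HiP's implementation: the class `HierarchicalPlanner`, its field names, and the string-based "frames" are hypothetical, and inferring one action from each pair of consecutive frames is an assumption about how an action model might consume the video plan.

```python
from dataclasses import dataclass
from typing import Callable, List

# Toy composition of three stand-in models. Real foundation models
# would replace each callable; strings stand in for images here.


@dataclass
class HierarchicalPlanner:
    task_model: Callable[[str], List[str]]        # goal -> sub-goals (LLM stand-in)
    video_model: Callable[[str, str], List[str]]  # (sub-goal, observation) -> frames
    action_model: Callable[[str, str], str]       # (frame, next frame) -> action

    def plan(self, goal: str, observation: str) -> List[str]:
        actions = []
        for subgoal in self.task_model(goal):
            # Predicted observation trajectory, starting from the current view.
            frames = [observation] + self.video_model(subgoal, observation)
            # Assumption: one action is inferred per consecutive frame pair.
            for current, nxt in zip(frames, frames[1:]):
                actions.append(self.action_model(current, nxt))
            observation = frames[-1]
        return actions


planner = HierarchicalPlanner(
    task_model=lambda goal: ["fill pot", "boil pot"],
    video_model=lambda sg, obs: [f"{sg}:frame{i}" for i in range(2)],
    action_model=lambda cur, nxt: f"move({cur}->{nxt})",
)
actions = planner.plan("make tea", "start")
print(actions)
```

Keeping each level behind its own callable mirrors the article's point that the models are trained separately and only need to interface with each other.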

Still, the multimodal AI work is limited by the lack of high-quality video foundation models. Once available, they could interface with HiP’s small-scale video models to further enhance visual sequence prediction and robot action generation. A higher-quality version would also reduce the current data requirements of the video models.

That being said, the CSAIL team’s approach only used a tiny bit of data overall. Moreover, HiP was cheap to train and demonstrated the potential of using readily available foundation models to complete long-horizon tasks.

“What Anurag has demonstrated is proof-of-concept of how we can take models trained on separate tasks and data modalities and combine them into models for robotic planning. In the future, HiP could be augmented with pre-trained models that can process touch and sound to make better plans,” said senior author Pulkit Agrawal, MIT assistant professor in EECS and director of the Improbable AI Lab. The group is also considering applying HiP to solving real-world long-horizon tasks in robotics.

Editor’s Note: This article was republished from MIT News.