Up to now few weeks, a number of “autonomous background coding brokers” have been launched.

- Supervised coding brokers: Interactive chat brokers which can be pushed and steered by a developer. Create code domestically, within the IDE. Instrument examples: GitHub Copilot, Windsurf, Cursor, Cline, Roo Code, Claude Code, Aider, Goose, …

- Autonomous background coding brokers: Headless brokers that you just ship off to work autonomously via a complete activity. Code will get created in an surroundings spun up completely for that agent, and normally ends in a pull request. A few of them are also runnable domestically although. Instrument examples: OpenAI Codex, Google Jules, Cursor background brokers, Devin, …

I gave a activity to OpenAI Codex and another brokers to see what I can be taught. The next is a report of 1 explicit Codex run, that can assist you look behind the scenes and draw your individual conclusions, adopted by a few of my very own observations.

The duty

We’ve got an inner software known as Haiven that we use as a demo frontend for our software program supply immediate library, and to run some experiments with completely different AI help experiences on software program groups. The code for that software is public.

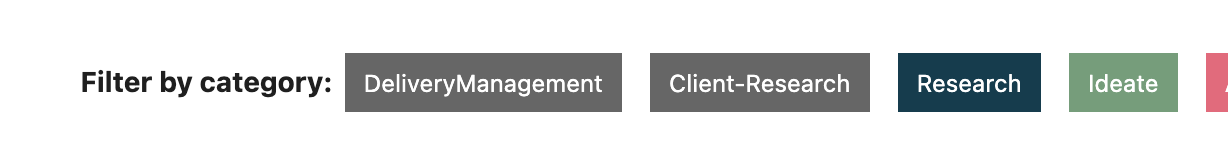

The duty I gave to Codex was concerning the next UI concern:

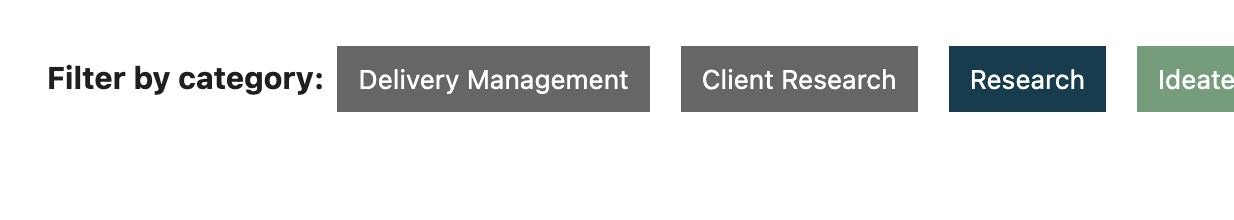

Precise:

What I wished from the agent:

Immediate: After we create the filter labels on the dashboard, we create human readable labels primarily based on the classes we've got. "client-research" is changed into "Consumer-Analysis", "deliveryManagement" into "DeliveryManagement". Enhance the sophistication of the "category-to-human-readable" logic in order that the labels are showing to the consumer as "Consumer Analysis" and "Supply Administration"

This activity is

- Comparatively small

- A typical “cosmetics” activity that isn’t tremendous pressing and would possibly get deprioritised time and again

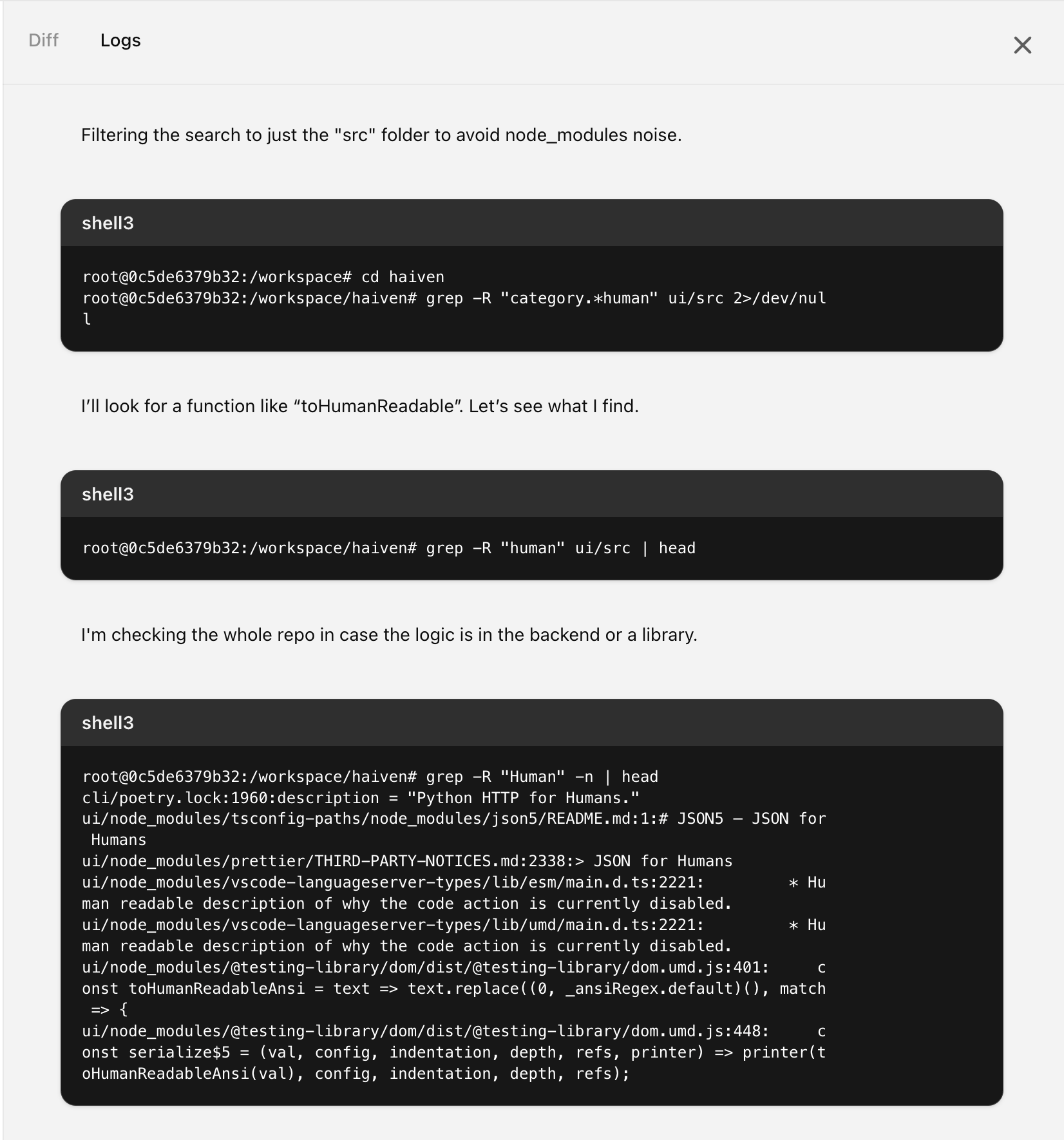

Agent logs

I’m sharing the detailed logs right here so to get an impression of what’s going on underneath the hood.

Right here’s what the logs view seems to be like in Codex:

Accumulating info first

The primary 17 log entries had been all about studying AGENTS.md and README.md:

- “I must examine the AGENTS.md within the repository root for directions.”

- seems to be for AGENTS.md, however I had truly known as it AGENT.md, which led to some backwards and forwards till it figured that out

- reads README.md

- Appears to be like at .pre-commit, finds a check setup

The next tables checklist the remainder of the log messages verbatim. After every of these messages, the agent executed a command, for a few of them I added that precise command for context. I’m positive that the log messages are simply summaries of the tokens that had been truly occurring behind the scenes, as a result of the messages are approach too brief to symbolize the total “considering” the mannequin should have been going via.

Looking for the related code with a number of grep

| Message | Command (samples) | Notes |

|---|---|---|

| I must seek for “category-to-human-readable” to find the place to make adjustments for the frontend labels. | grep -R "human readable" -n |

|

| I’ll search for any mentions of “class” within the mission. | ||

| Grep output is very large due to node_modules; I’ll restrict it to src as a substitute. | ||

| I’ll seek for “to human” within the src listing to search out related references. | ||

| I’ll open and examine index.js to see what’s inside. | ||

| I’m checking if the category-to-human-readable transformation is dealt with in _navigation_items or elsewhere. | ||

| I’m going to search for “human readable” to get extra information on the subject. | grep -R "human-readable" -n ui/src | head |

|

| I’m wondering if there’s a operate for changing classes into one thing extra readable. | ||

| Filtering the search to simply the “src” folder to keep away from node_modules noise. | second time it’s working into this, didn’t ‘bear in mind’ | |

| I’ll search for a operate like “toHumanReadable”. Let’s see what I discover. | ||

| I’m checking the entire repo in case the logic is within the backend or a library. | grep -R "Human" -n | head |

|

| I’ll restrict the scope to app/ and ui/src to keep away from the massive node_modules folder. | third time it’s working into this, didn’t ‘bear in mind’ | |

| I feel the logic is likely to be lacking, so I’ll examine _navigation_items and different modules for classes. | grep -R "human" app -n | head |

|

| I feel it’s time to look a bit deeper. Let’s dive in and discover. | grep -R "human" app | wc -l |

To “look deeper”, it searches for “human” once more, however pipes it into wc as a substitute of head? |

Lastly, it’s getting nearer! The labels had been beforehand crudely capitalized by way of CSS, it finds that

| Message | Command (samples) | Notes |

|---|---|---|

| I’m checking for references to “capitalize” or class transformation within the frontend. | Output: <br>root@0c5de6379b32:/workspace/haiven# grep -R "capitalize" -n ui/src | head<br>ui/src/pages/index.js:250: className="capital<br>ize"<br>ui/src/kinds/globals.css:1915:.capitalize {<br>ui/src/kinds/globals.css:1916: text-transform: capitalize;<br>ui/src/kinds/globals.css:1931: text-transform: capitalize;<br> |

|

| I’ll examine across the Tag part in index.js. | ||

| Tag show capitalizes phrases incorrectly on account of hyphen or camelCase; I’ll have a look at filter classes. |

Now it’s beginning to examine for extra related code within the app folder, the place the backend is. It doesn’t discover something, which is appropriate, there’s nothing related there

| Message | Command (samples) | Notes |

|---|---|---|

| I’ll examine the repo for a way classes are formatted, specializing in hyphens and camelCase. | grep -R "classes" -n app | head |

|

| Time to strive a brand new search! | grep -R "consumer" -n app | head |

|

| I’ll take a more in-depth have a look at the prompts within the check knowledge. | grep -R "classes:" -n app/assessments | head |

Why? |

| It seems to be just like the check knowledge has restricted classes. I’ll examine the docs for any variations. | grep -R "client-research" -n |

|

| I’ll begin by opening the information to take a more in-depth look. | sed -n '1,100p' ui/src/app/_navigation_items.js |

Now it’s going again to looking within the ui folder – it discovered the CSS beforehand, and is now on the lookout for present code that it might reuse to unravel the duty. That’s nice, as a result of we certainly have already got the same operate, and it finds it:

| Message | Command (samples) | Notes |

|---|---|---|

| I would like a operate to transform tags like “client-research” to “Consumer Analysis” for filters. | grep -R "to.*Readable" -n ui/src | head |

|

| I’m opening the toReadableText operate inside _dynamic_data_renderer.js to discover it. | ||

| I’m shifting via the web page to get the small print I would like. |

It now finds a spot within the present operate’s implementation:

| Message | Command (samples) | Notes |

|---|---|---|

| I see that hyphens aren’t dealt with by the operate. I would like to regulate it for hyphens. | ||

| I’ll modify toReadableText to deal with hyphens, making certain correct capitalization and spacing. Let’s examine assessments! | It truly didn’t examine any assessments | |

| I’ll examine _dynamic_data_renderer.js to look at toReadableText and modify it for hyphen-case. | ||

| I’ll examine assessments/_sidebar.check.js to overview the associated classes there. | ||

| I’ll examine different information to see if toReadableText seems anyplace. | ||

| I’ll examine if _sidebar or others include a toReadableText operate. |

Replace the check for the operate that it desires to vary:

| Message | Command (samples) | Notes |

|---|---|---|

| I’ll alter the operate to deal with hyphen-case, then replace assessments to mirror that. | ||

| I’ll examine the check file after line 36 to make sure hyphen-case is roofed. | ||

| I’ll scroll via strains 60 to 120 to look at the remainder of the file. | ||

| I’m scrolling the remainder to seize all of the related info. | ||

| I’ll add assessments for hyphen-case and replace toReadableText, together with modifying index.js for classes. | ||

| I’ll alter _dynamic_data_renderer.js and index.js to make use of toReadableText and replace assessments. | git standing --short |

You’ll be able to configure some surroundings startup instructions in Codex, I had configured that to be yarn set up, which led to the next:

| Message | Command (samples) | Notes |

|---|---|---|

| There are untracked .yarn/ and .yarnrc information. I’ll examine and clear them up. | ||

| I’ll examine the basis of ‘ui’ and take away any pointless Yarn 4 entries. | ||

| I’ll have a look at the commit historical past to see if these .yarn information had been added later. | git log --oneline -n 1 |

|

| I’ll overview the repository’s .gitignore to verify if Node modules are excluded. | grep yarn .gitignore |

|

| I see no entry for .yarn or .yarnrc. I’ll examine and take away them if wanted. | cat ui/.gitignore |

|

| I’ll examine if I can take away .yarn or .yarnrc information since they shouldn’t be dedicated. | ||

| It seems to be like yarn.lock adjustments had been made. Time to reset to HEAD. |

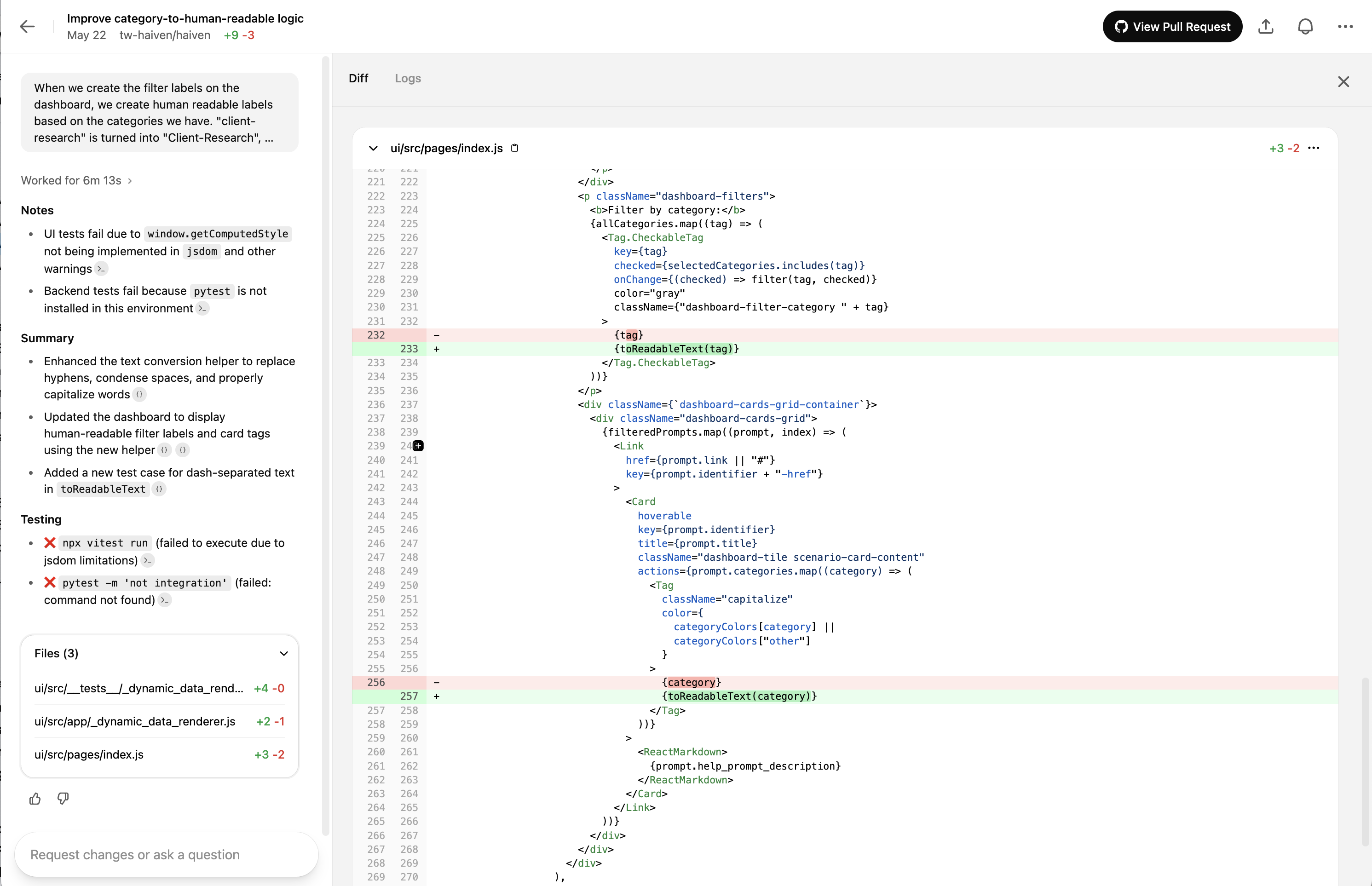

Precise code adjustments:

| Message | Command (samples) | Notes |

|---|---|---|

| Time to change the code. I’ll start the required updates now. | sed -n '1,30p' ... |

|

| I’m going so as to add a hyphen to the required a part of the code. | ||

| I’m going to make adjustments to the index.js file now. | ||

| I’ll import toReadableText and begin utilizing it within the code. | ||

| I’ll replace index.js to import toReadableText and alter the _dynamic_data_renderer assessments. | ||

| I would like to exchange {tag} with {toReadableText(tag)} for higher rendering. | ||

| I’ll check out the variations to higher perceive the adjustments. | git diff |

|

| I’ll overview the adjustments in index.js to see what’s been modified. | ||

| I’ll add a brand new check for hyphenated circumstances in _dynamic_data_renderer.check.js. | ||

| I’ll think about inserting a check after line 35. |

Working our code linter subsequent, good:

| Message | Command (samples) | Notes |

|---|---|---|

| I’ll format the code utilizing Prettier as instructed. | cd ui && npx prettier -w ... |

Prettier is talked about within the AGENTS.md. It appears to run prettier efficiently, although not one of the information had been modified |

| I’ll overview the logs to collect extra info. |

Attempting to run the assessments – unsuccessfully:

| Message | Command (samples) | Notes |

|---|---|---|

| I’ll run the yarn assessments to examine if all the things’s working tremendous. | ||

| Checks would possibly’ve been cached or want community—output’s empty, so I’ll examine the log. | ||

| The lockfile isn’t up to date. I’ll strive working ‘yarn set up’ for the reason that surroundings permits it. | ||

| The surroundings is offline, so yarn set up can’t be run, however I see a node_modules folder. | ||

| I see node_modules are already put in. I’ll examine the log for why assessments failed. | ||

| The error occurs as a result of Yarn 4 depends on .yarn and .yarnrc, which we eliminated. | ? | |

| It seems to be like .yarnrc.yml isn’t tracked. I’ll additionally examine .yarn for monitoring. | ||

| I’m double-checking the bundle.json to see if “haiven-ui” is the proper title. | ? | |

| I’ll examine the Node model to verify if it’s 18 or 20 by working node -v. | bizarre, the node model laid out in bundle.json is 22.6 | |

| I feel switching to npx vitest run may repair the error. Let’s strive that. | ||

| The output is lengthy—now I’ll seize the final abstract strains. | Error: Not applied: window.getComputedStyle(elt, pseudoElt) |

|

| The output is simply too lengthy, so I’ll extract the important thing factors for a abstract. |

Occurring a tangent about organising the venv, which is completely pointless as a result of it solely plans adjustments for frontend code:

| Message | Command (samples) | Notes |

|---|---|---|

| The assessments failed on account of case variations, however now I’ll activate the digital surroundings. | AGENTS.md incorporates directions to activate venv for the Python backend, however we’re within the frontend right here, so… | |

| Hmm, the .venv isn’t right here. I ought to examine if it wants creation or already exists. | ||

| It seems to be just like the .venv listing is lacking. Let’s overview AGENT’s directions fastidiously. | ||

| The surroundings appears offline, however there is likely to be a script to arrange dependencies domestically. | ||

| I can’t run “poetry set up” offline, however I’ll examine the .cursor folder for dependencies. | ||

| I can’t run backend assessments with the present setup, however I’ll strive pytest anyway. |

Gave up on the assessments, now wrapping up the adjustments:

| Message | Command (samples) | Notes |

|---|---|---|

| Checks failed on account of lacking dependencies. Now, I’ll examine standing and proceed with committing. | ||

| I feel the .yarnrc.yml was left over from a earlier yarn set up. Eradicating it. | ||

| I’ll seize the related file strains for the citations now. | ||

| I’ll get the encircling line vary for the adjustments in index.js. | nl -ba ui/src/pages/index.js | sed -n '12,28p' |

|

| I’ll seize the related strains round these adjustments now. | ||

| It’s time to finalize the PR message and confirm the commit. | ||

| UI assessments failed on account of surroundings points. I’ll reference the final failure strains. Finished! |

Right here is the total pull request created by Codex.

Observations

Brute textual content search

I discovered it fascinating to see that Codex, and many of the different coding assistants I’m utilizing, are resorting increasingly more to easy textual content search to search out related code. Within the log above you see how Codex goes via a bunch of search phrases with grep, to search out related items of code (“human”, “human readable”, “humanReadable”, …). The explanation why I discover it fascinating is as a result of there have been plenty of seemingly extra refined code search mechanisms applied, like semantic search over codebase indices with vectors / embeddings (Cursor, GH Copilot, Windsurf), or utilizing the summary syntax tree as a place to begin (Aider, Cline). The latter continues to be fairly easy, however doing textual content search with grep is the only doable.

It looks like the instrument creators have discovered that this easy search continues to be the simplest in spite of everything – ? Or they’re making some form of trade-off right here, between simplicity and effectiveness?

The distant dev surroundings is vital for these brokers to work “within the background”

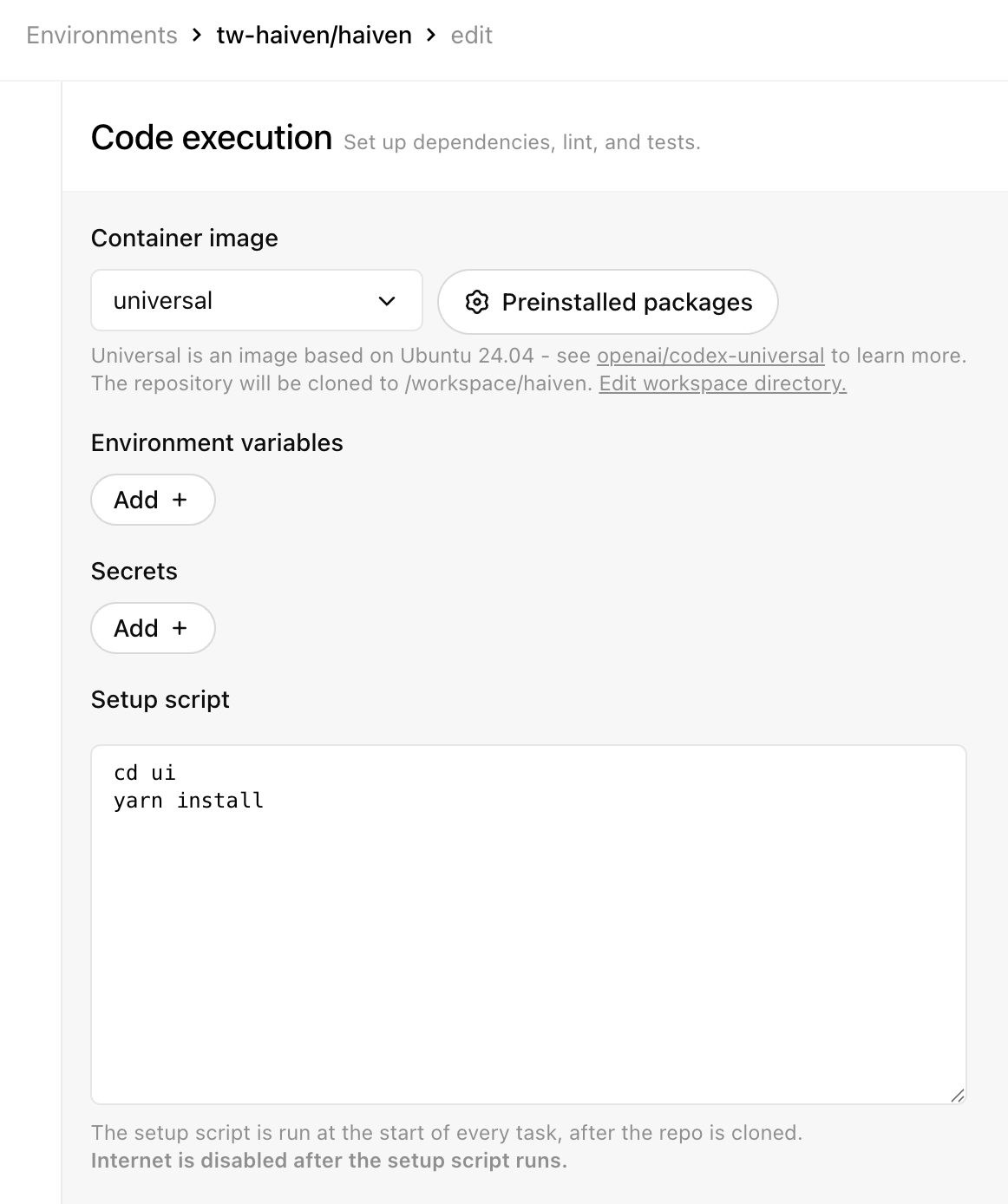

Here’s a screenshot of Codex’s surroundings configuration display (as of finish of Could 2024). As of now, you possibly can configure a container picture, surroundings variables, secrets and techniques, and a startup script. They level out that after the execution of that startup script, the surroundings won’t have entry to the web anymore, which might sandbox the surroundings and mitigate a number of the safety dangers.

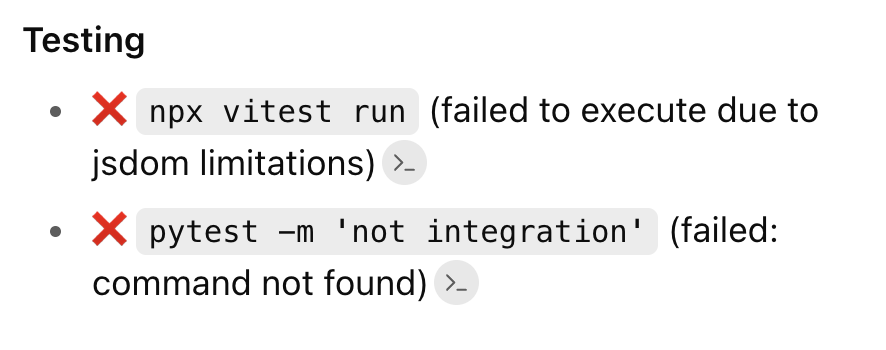

For these “autonomous background brokers”, the maturity of the distant dev surroundings that’s arrange for the agent is essential, and it’s a difficult problem. On this case e.g., Codex didn’t handle to run the assessments.

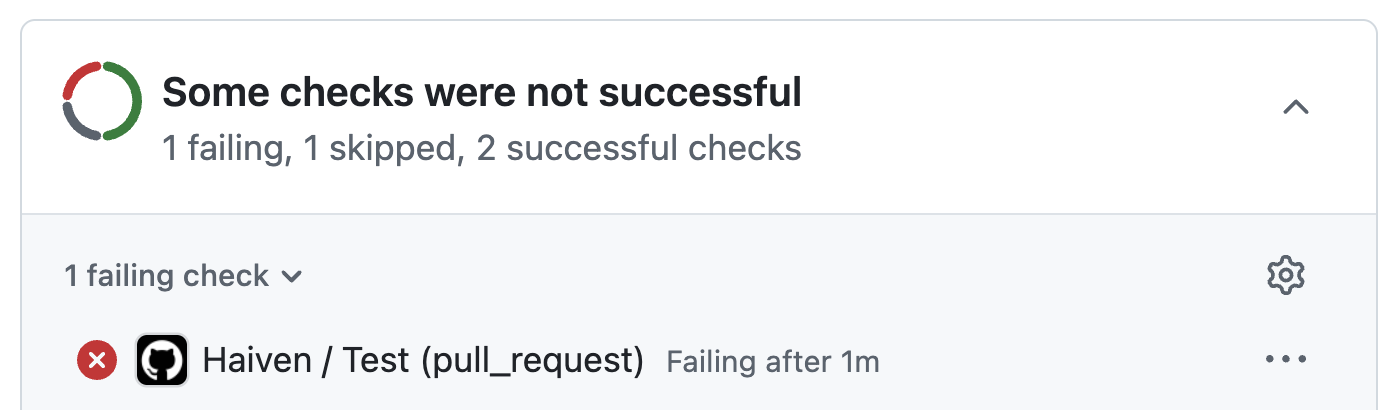

And it turned out that when the pull request was created, there have been certainly two assessments failing due to regression, which is a disgrace, as a result of if it had identified, it might have simply been in a position to repair the assessments, it was a trivial repair:

This explicit mission, Haiven, truly has a scripted developer security internet, within the type of a fairly elaborate .pre-commit configuration. () It will be very best if the agent may execute the total pre-commit earlier than even making a pull request. Nevertheless, to run all of the steps, it might must run

- Node and yarn (to run UI assessments and the frontend linter)

- Python and poetry (to run backend assessments)

- Semgrep (for security-related static code evaluation)

- Ruff (Python linter)

- Gitleaks (secret scanner)

…and all of these need to be obtainable in the best variations as nicely, in fact.

Determining a easy expertise to spin up simply the best surroundings for an agent is vital for these agent merchandise, if you wish to actually run them “within the background” as a substitute of a developer machine. It’s not a brand new drawback, and to an extent a solved drawback, in spite of everything we do that in CI pipelines on a regular basis. Nevertheless it’s additionally not trivial, and in the meanwhile my impression is that surroundings maturity continues to be a problem in most of those merchandise, and the consumer expertise to configure and check the surroundings setups is as irritating, if no more, as it may be for CI pipelines.

Resolution high quality

I ran the identical immediate 3 instances in OpenAI Codex, 1 time in Google’s Jules, 2 instances domestically in Claude Code (which isn’t absolutely autonomous although, I wanted to manually say ‘sure’ to all the things). Regardless that this was a comparatively easy activity and resolution, turns on the market had been high quality variations between the outcomes.

Excellent news first, the brokers got here up with a working resolution each time (leaving breaking regression assessments apart, and to be sincere I didn’t truly run each single one of many options to verify). I feel this activity is an efficient instance of the kinds and sizes of duties that GenAI brokers are already nicely positioned to work on by themselves. However there have been two facets that differed by way of high quality of the answer:

- Discovery of present code that may very well be reused: Within the log right here you’ll discover that Codex discovered an present element, the “dynamic knowledge renderer”, that already had performance for turning technical keys into human readable variations. Within the 6 runs I did, solely 2 instances did the respective agent discover this piece of code. Within the different 4, the brokers created a brand new file with a brand new operate, which led to duplicated code.

- Discovery of an extra place that ought to use this logic: The crew is at the moment engaged on a brand new characteristic that additionally shows class names to the consumer, in a dropdown. In one of many 6 runs, the agent truly found that and steered to additionally change that place to make use of the brand new performance.

| Discovered the reusable code | Went the additional mile and located the extra place the place it ought to be used |

|---|---|

| Sure | Sure |

| Sure | No |

| No | Sure |

| No | No |

| No | No |

| No | No |

I put these outcomes right into a desk as an example that in every activity given to an agent, we’ve got a number of dimensions of high quality, of issues that we wish to “go proper”. Every agent run can “go fallacious” in a single or a number of of those dimensions, and the extra dimensions there are, the much less probably it’s that an agent will get all the things finished the best way we wish it.

Sunk value fallacy

I’ve been questioning – let’s say a crew makes use of background brokers for such a activity, the forms of duties which can be form of small, and neither vital nor pressing. Haiven is an internal-facing software, and has solely two builders assigned in the meanwhile, so such a beauty repair is definitely thought of low precedence because it takes developer capability away from extra vital issues. When an agent solely form of succeeds, however not absolutely – through which conditions would a crew discard the pull request, and through which conditions would they make investments the time to get it the final 20% there, regardless that spending capability on this had been deprioritised? It makes me marvel in regards to the tail finish of unprioritised effort we’d find yourself with.