Real-time customer 360 applications are essential in allowing departments within a company to have reliable and consistent data on how a customer has engaged with the product and services. Ideally, when someone from a department has engaged with a customer, you want up-to-date information so the customer doesn’t get frustrated and repeat the same information multiple times to different people. Also, as a company, you can start anticipating the customers’ needs. It’s part of building a stellar customer experience, where customers want to keep coming back, and you start building customer champions. Customer experience is part of the journey of building loyal customers. To start this journey, you need to capture how customers have interacted with the platform: what they’ve clicked on, what they’ve added to their cart, what they’ve removed, and so on.

When building a real-time customer 360 app, you’ll definitely need event data from a streaming data source, like Kafka. You’ll also need a transactional database to store customers’ transactions and personal information. Finally, you may want to combine some historical data from customers’ prior interactions as well. From here, you’ll want to analyze the event, transactional, and historical data in order to understand their trends, build personalized recommendations, and begin anticipating their needs at a much more granular level.

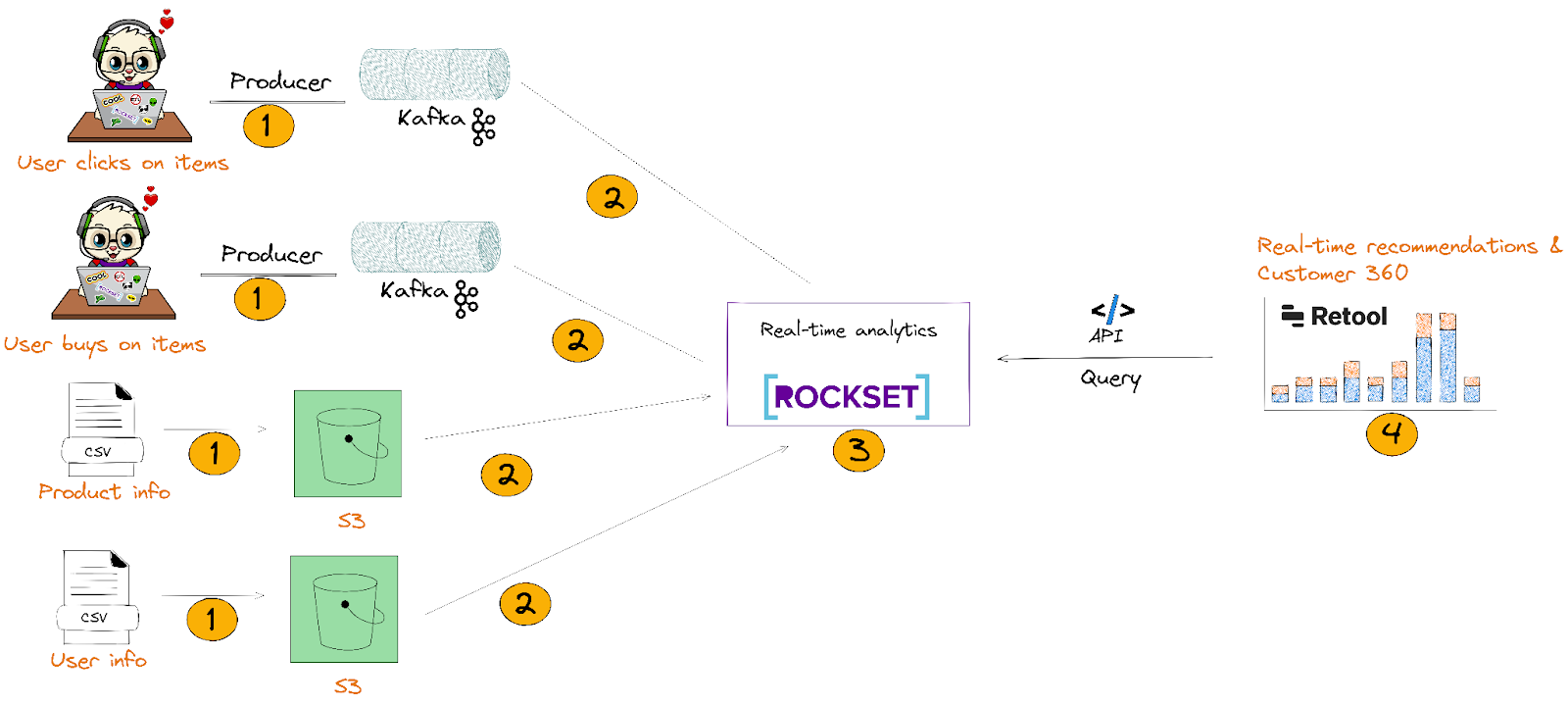

We’ll be building a basic version of this using Kafka, S3, Rockset, and Retool. The idea here is to show you how to integrate real-time data with data that’s static/historical to build a comprehensive real-time customer 360 app that gets updated within seconds:

- We’ll send clickstream and CSV data to Kafka and AWS S3 respectively.

- We’ll integrate with Kafka and S3 through Rockset’s data connectors. This allows Rockset to automatically ingest and index JSON, i.e. nested semi-structured data, without flattening it.

- In the Rockset Query Editor, we’ll write complex SQL queries that JOIN, aggregate, and search data from Kafka and S3 to build real-time recommendations and customer 360 profiles. From there, we’ll create data APIs that’ll be used in Retool (step 4).

- Finally, we’ll build a real-time customer 360 app with the internal tools on Retool that’ll execute Rockset’s Query Lambdas. We’ll see the customer’s 360 profile that’ll include their product recommendations.

Key requirements for building a real-time customer 360 app with recommendations

Streaming data source to capture customer’s activities: We’ll need a streaming data source to capture what grocery items customers are clicking on, adding to their cart, and much more. We’re working with Kafka because it has a high fanout and it’s easy to work with many ecosystems.

Real-time database that handles bursty data streams: You need a database that separates ingest compute, query compute, and storage. By separating these services, you can scale the writes independently from the reads. Typically, if you couple compute and storage, high write rates can slow the reads and decrease query performance. Rockset is one of the few databases that separate ingest compute, query compute, and storage.

Real-time database that handles out-of-order events: You need a mutable database to update, insert, or delete records. Again, Rockset is one of the few real-time analytics databases that avoids expensive merge operations.

Internal tools for operational analytics: I chose Retool because it’s easy to integrate and use APIs as a resource to display the query results. Retool also has an automatic refresh, where you can continually refresh the internal tools every second.

Let’s build our app using Kafka, S3, Rockset, and Retool

So, about the data

Event data to be sent to Kafka

In our example, we’re building a recommendation of what grocery items our user can consider buying. We created 2 separate event data sets in Mockaroo that we’ll send to Kafka:

- user_activity_v1: This is where users add, remove, or view grocery items in their cart.

- user_purchases_v1: These are purchases made by the customer. Each purchase has the amount, a list of items they bought, and the type of card they used.
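To make the two event types concrete, here’s a minimal Python sketch of what one record of each might look like. The field names (`userid`, `action`, `product_id`, `items`, `amount`, `card_type`, `event_time`) are assumptions for illustration; the actual Mockaroo schemas from the workshop may differ.

```python
import json
import random
from datetime import datetime, timezone

# Hypothetical field names and values; the real Mockaroo schemas may differ.
ACTIONS = ["add", "remove", "view"]
CARD_TYPES = ["visa", "mastercard", "amex"]


def make_activity_event(user_id: str, product_id: int, rng: random.Random) -> dict:
    """One user_activity_v1-style event: a user adds, removes, or views an item."""
    return {
        "userid": user_id,
        "action": rng.choice(ACTIONS),
        "product_id": product_id,
        "event_time": datetime.now(timezone.utc).isoformat(),
    }


def make_purchase_event(user_id: str, product_ids: list, amount: float,
                        rng: random.Random) -> dict:
    """One user_purchases_v1-style event: amount, items bought, and card type."""
    return {
        "userid": user_id,
        "amount": round(amount, 2),
        "items": list(product_ids),  # nested array, kept as an array in the JSON
        "card_type": rng.choice(CARD_TYPES),
        "event_time": datetime.now(timezone.utc).isoformat(),
    }


rng = random.Random(42)  # seeded so repeated runs pick the same values
activity = make_activity_event("u-001", 17, rng)
purchase = make_purchase_event("u-001", [17, 23, 5], 42.5, rng)
print(json.dumps(activity))
print(json.dumps(purchase))
```

Note that the purchase keeps its items as a nested array, which is the kind of semi-structured JSON we’ll later query without flattening it first.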

You can read more about how we created the data set in the workshop.

S3 data set

We have 2 public buckets:

Send event data to Kafka

The easiest way to get set up is to create a Confluent Cloud cluster with 2 Kafka topics:

- user_activity

- user_purchases

Alternatively, you can find instructions on how to set up the cluster in the Confluent-Rockset workshop.

You’ll want to send data to the Kafka stream by modifying this script on the Confluent repo. In my workshop, I used Mockaroo data and sent that to Kafka. You can follow the workshop link to get started with Mockaroo and Kafka!
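If you’d rather see the shape of the sending step before opening the script, here’s a minimal sketch. It is not the Confluent script itself: `to_record` and `send_all` are hypothetical helpers, and the producer is a stand-in callable so the example runs without a cluster.

```python
import json
from typing import Callable, Iterable, List, Tuple

# Topic name taken from the list above; the helpers below are illustrative.
ACTIVITY_TOPIC = "user_activity"


def to_record(event: dict) -> Tuple[bytes, bytes]:
    """Serialize an event as a (key, value) pair of bytes.

    Keying by user ID means Kafka's default partitioner routes one user's
    events to the same partition, which preserves their relative order.
    """
    key = str(event["userid"]).encode("utf-8")
    value = json.dumps(event).encode("utf-8")
    return key, value


def send_all(events: Iterable[dict], topic: str,
             produce: Callable[[str, bytes, bytes], None]) -> int:
    """Send every event through produce(topic, key, value); return the count."""
    sent = 0
    for event in events:
        key, value = to_record(event)
        produce(topic, key, value)
        sent += 1
    return sent


# Stand-in producer that collects records in memory.
outbox: List[Tuple[str, bytes, bytes]] = []
events = [
    {"userid": "u-001", "action": "add", "product_id": 17},
    {"userid": "u-001", "action": "view", "product_id": 23},
]
count = send_all(events, ACTIVITY_TOPIC, lambda t, k, v: outbox.append((t, k, v)))
print(count, outbox[0][1])
```

With the `confluent-kafka` Python package, the `produce` callable could wrap `Producer.produce(topic, key=key, value=value)`, followed by a `flush()` once all events are queued.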

S3 public bucket availability

The 2 public buckets are already available. When we get to the Rockset portion, you can plug in the S3 URI to populate the collection. No action is needed on your end.

Getting started with Rockset

You can follow the instructions on creating an account.

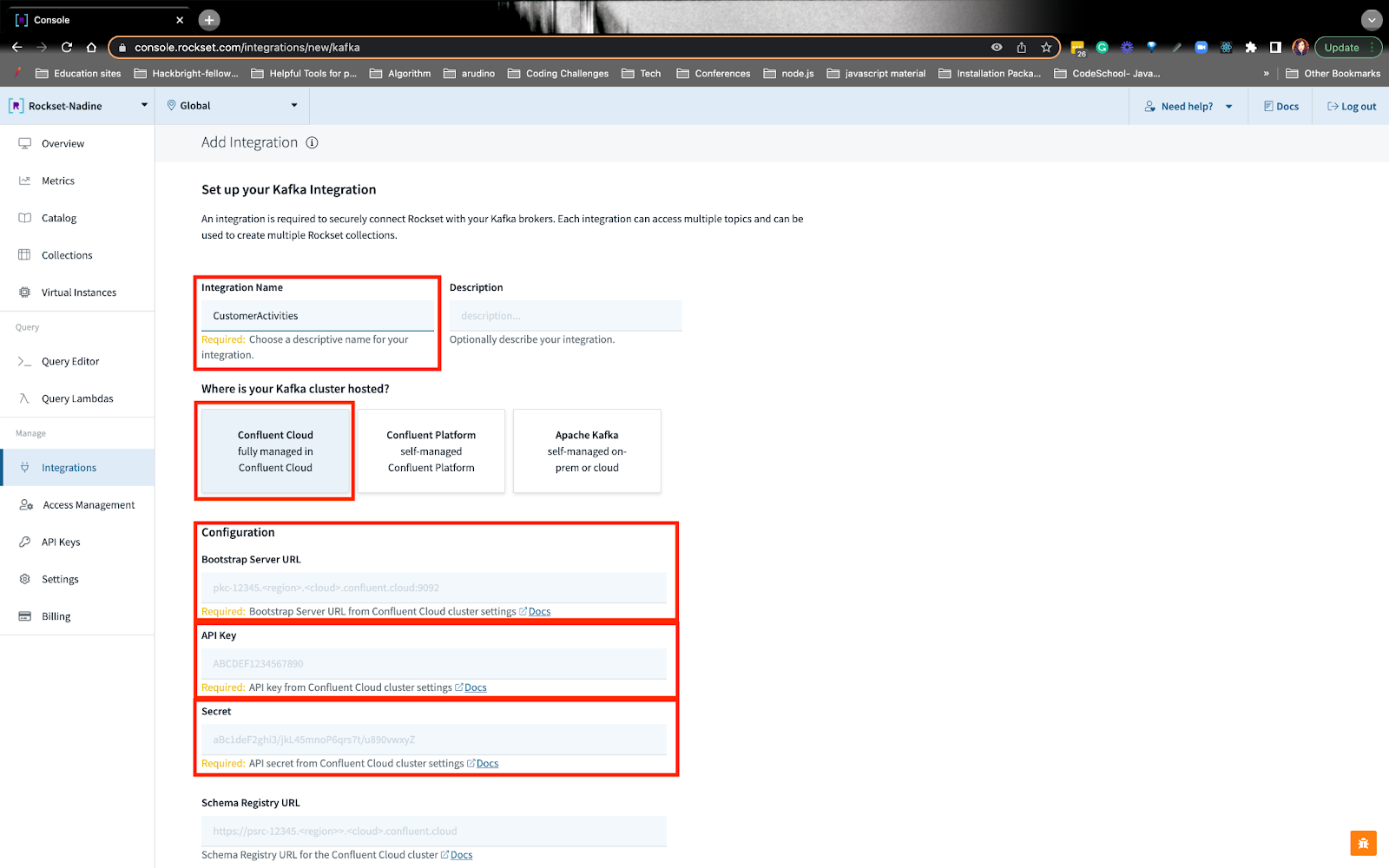

Create a Confluent Cloud integration on Rockset

In order for Rockset to read the data from Kafka, you have to give it read permissions. You can follow the instructions on creating an integration to the Confluent Cloud cluster. All you’ll need to do is plug in the bootstrap-url and API keys:

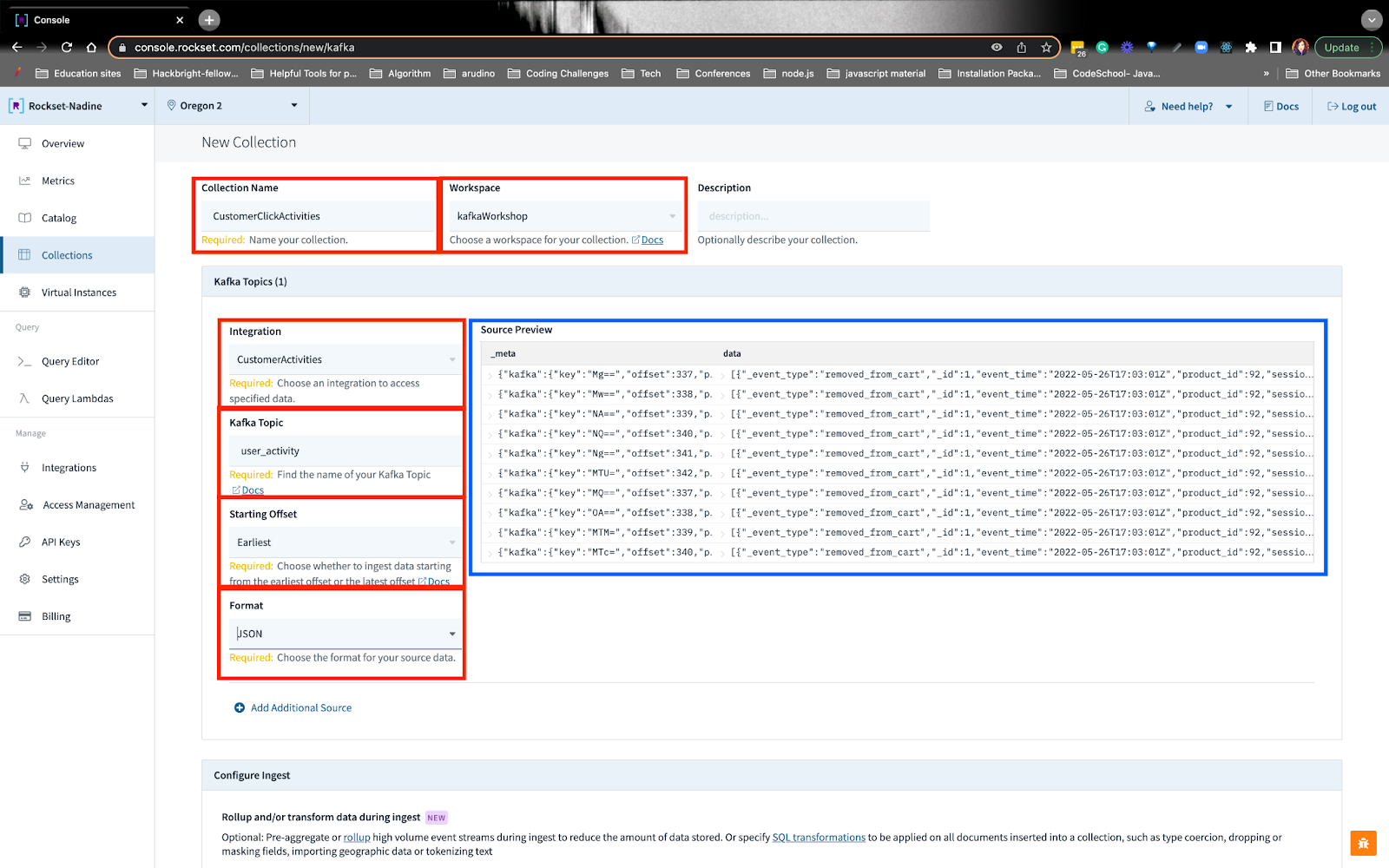

Create Rockset collections with transformed Kafka and S3 data

For the Kafka data source, you’ll put in the integration name we created earlier, topic name, offset, and format. When you do this, you’ll see the preview.

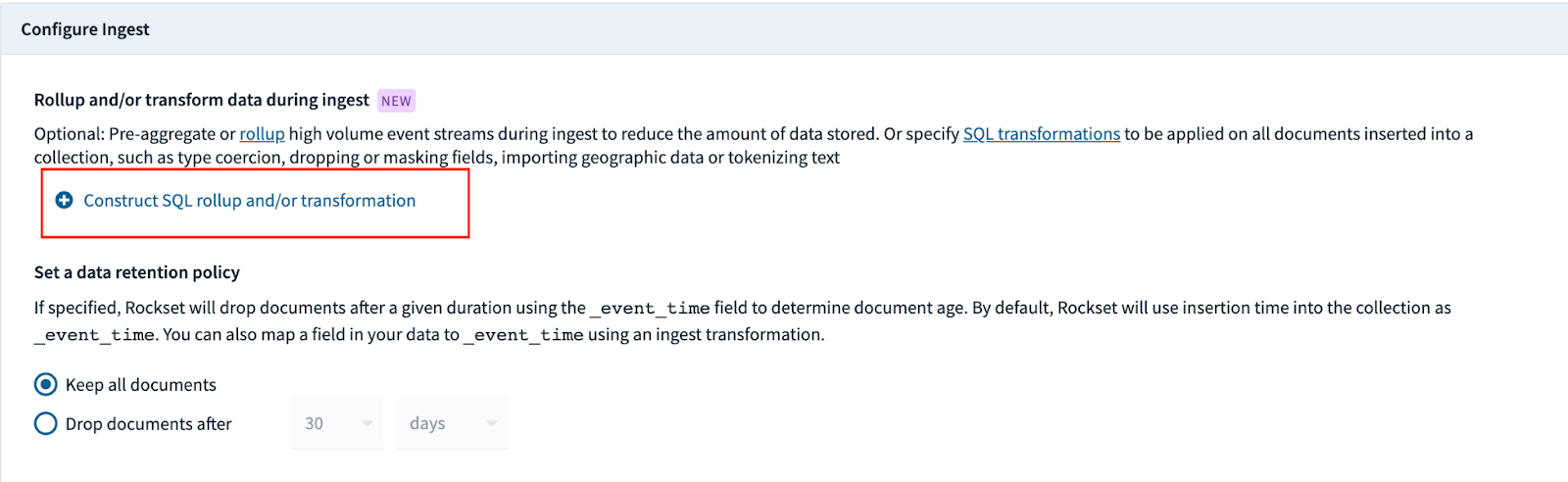

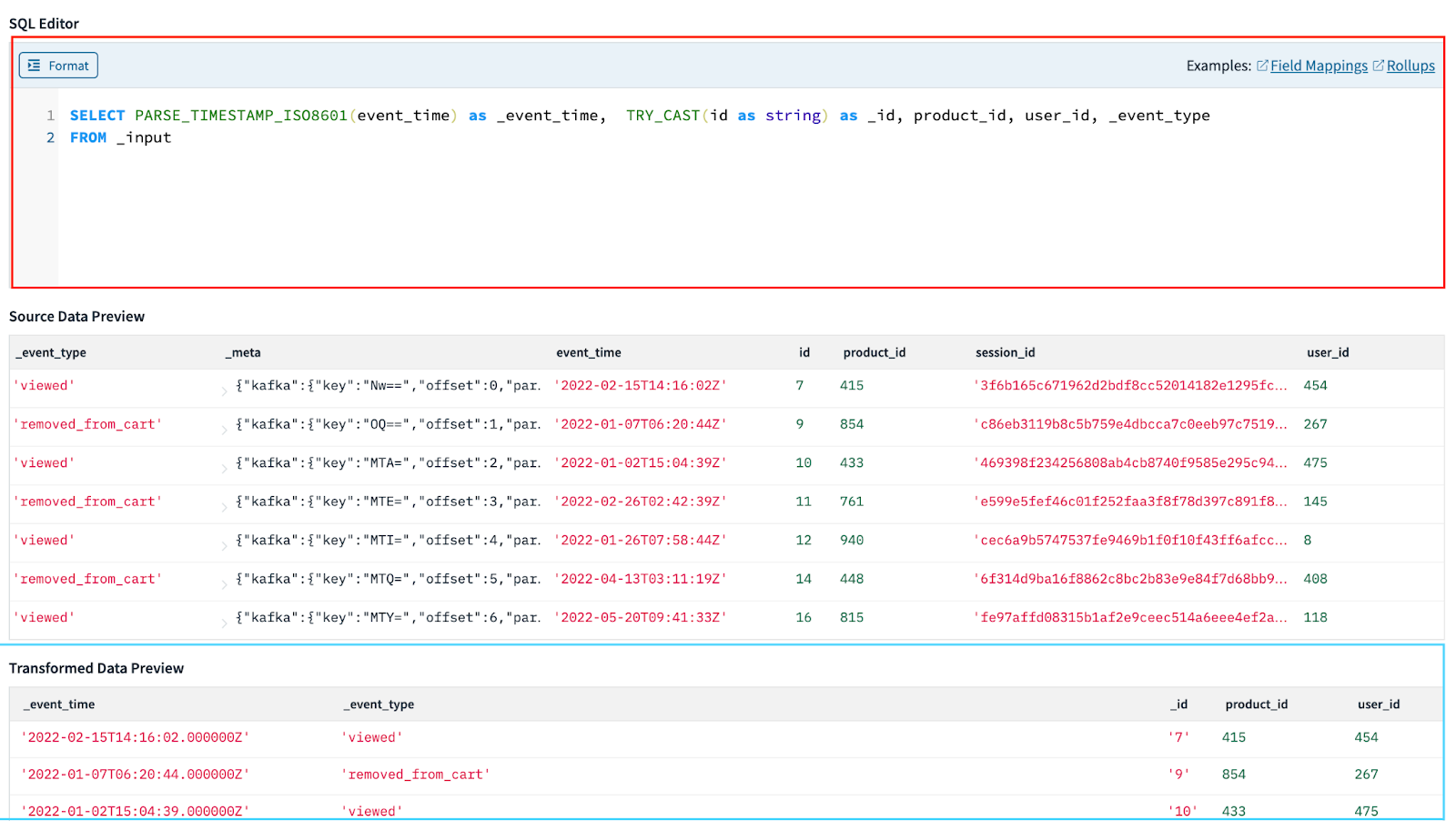

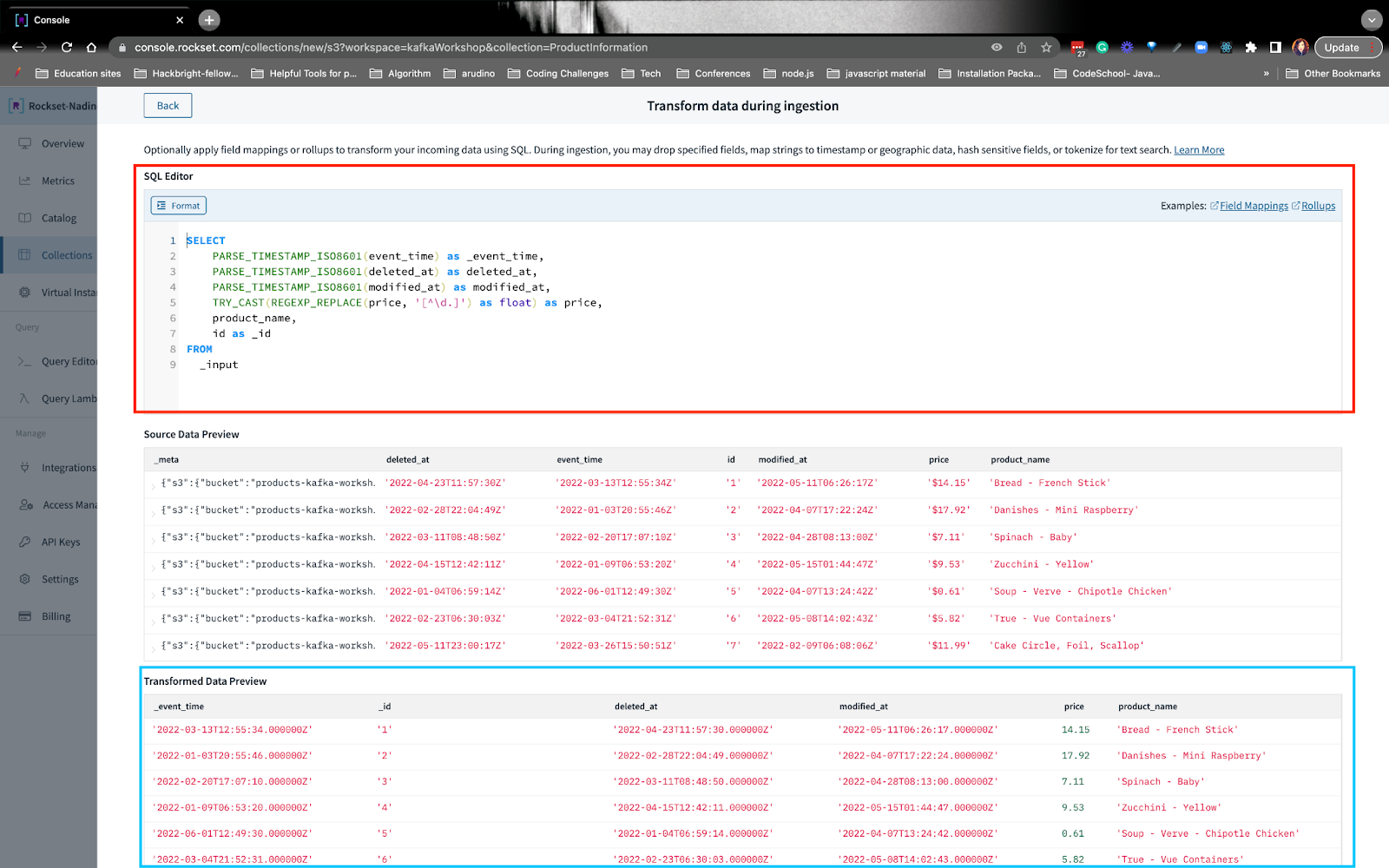

Towards the bottom of the collection, there’s a section where you can transform data as it is being ingested into Rockset:

From here, you can write SQL statements to transform the data:

In this example, I want to point out that we’re remapping event_time to _event_time. Rockset associates a timestamp with each document in a field named _event_time. If an _event_time is not provided when you insert a document, Rockset sets it to the time the data was ingested. We do the remapping because queries on this field are significantly faster than similar queries on regularly indexed fields.
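The remapping rule can be illustrated outside of Rockset with a few lines of Python. This is only a sketch of the semantics described above, not Rockset’s ingest code: `with_event_time` is a hypothetical helper, it assumes ISO 8601 timestamp strings, and dropping the original `event_time` field is a choice made for the example.

```python
from datetime import datetime, timezone
from typing import Optional


def with_event_time(doc: dict, ingested_at: Optional[datetime] = None) -> dict:
    """Return a copy of doc with _event_time populated.

    Uses the document's own event_time when present; otherwise falls back
    to the ingest time, mirroring the default described in the text.
    """
    ingested_at = ingested_at or datetime.now(timezone.utc)
    out = {k: v for k, v in doc.items() if k != "event_time"}
    raw = doc.get("event_time")
    if raw is None:
        out["_event_time"] = ingested_at
    else:
        # Assumes ISO 8601 strings such as "2022-05-01T12:00:00+00:00".
        out["_event_time"] = datetime.fromisoformat(raw)
    return out


ingest = datetime(2022, 5, 2, tzinfo=timezone.utc)
remapped = with_event_time({"userid": "u-001", "event_time": "2022-05-01T12:00:00+00:00"}, ingest)
defaulted = with_event_time({"userid": "u-001"}, ingest)
print(remapped["_event_time"], defaulted["_event_time"])
```

The first document keeps the time the event actually happened, while the second falls back to ingest time, which is why remapping matters for out-of-order or late-arriving events.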

When you’re done writing the SQL transformation query, you can apply the transformation and create the collection.

We’re also going to transform the Kafka topic user_purchases, in the same way I just explained here. You can follow along for more details on how we transformed and created the collection from these Kafka topics.

S3

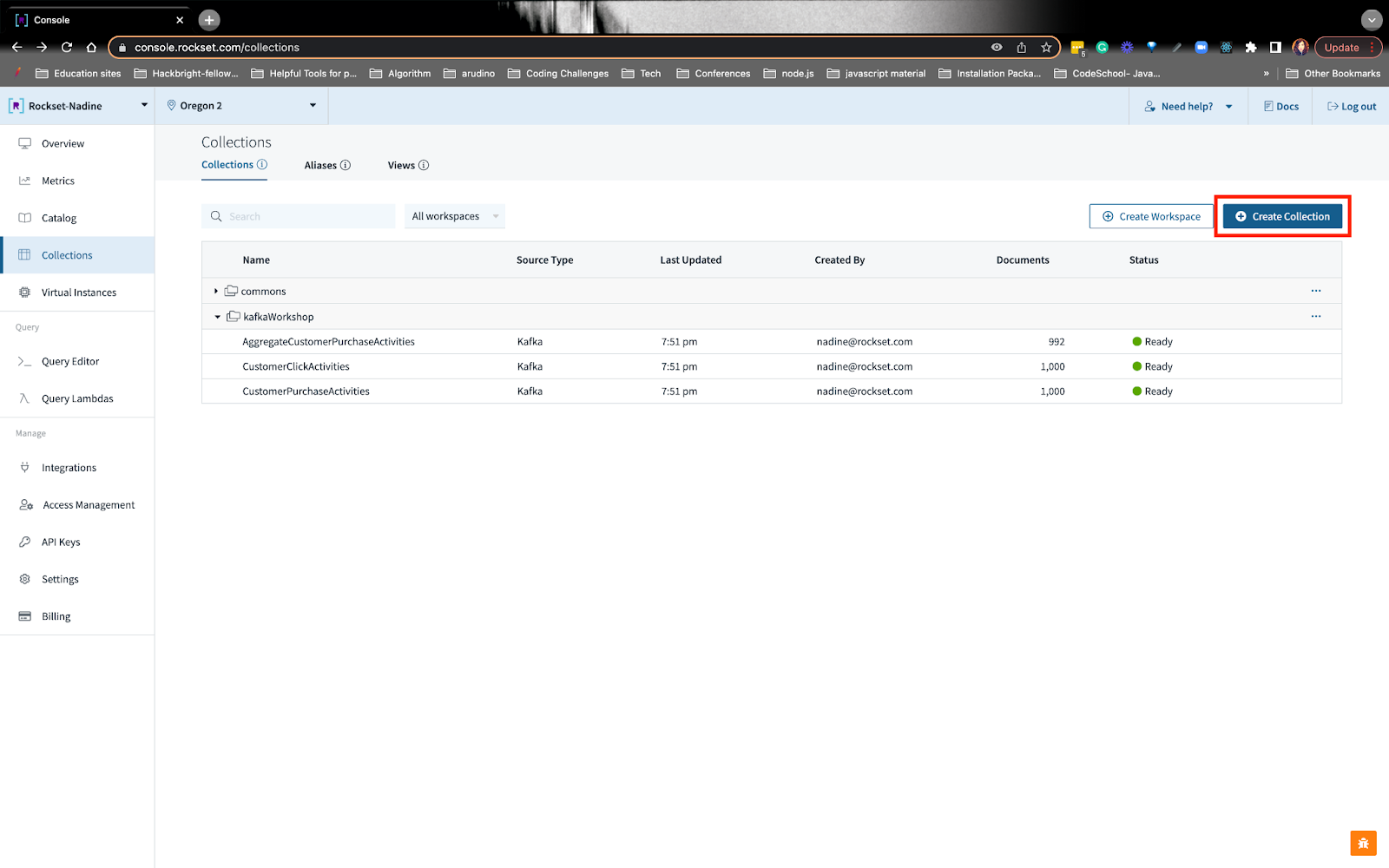

To get started with the public S3 bucket, you can navigate to the collections tab and create a collection:

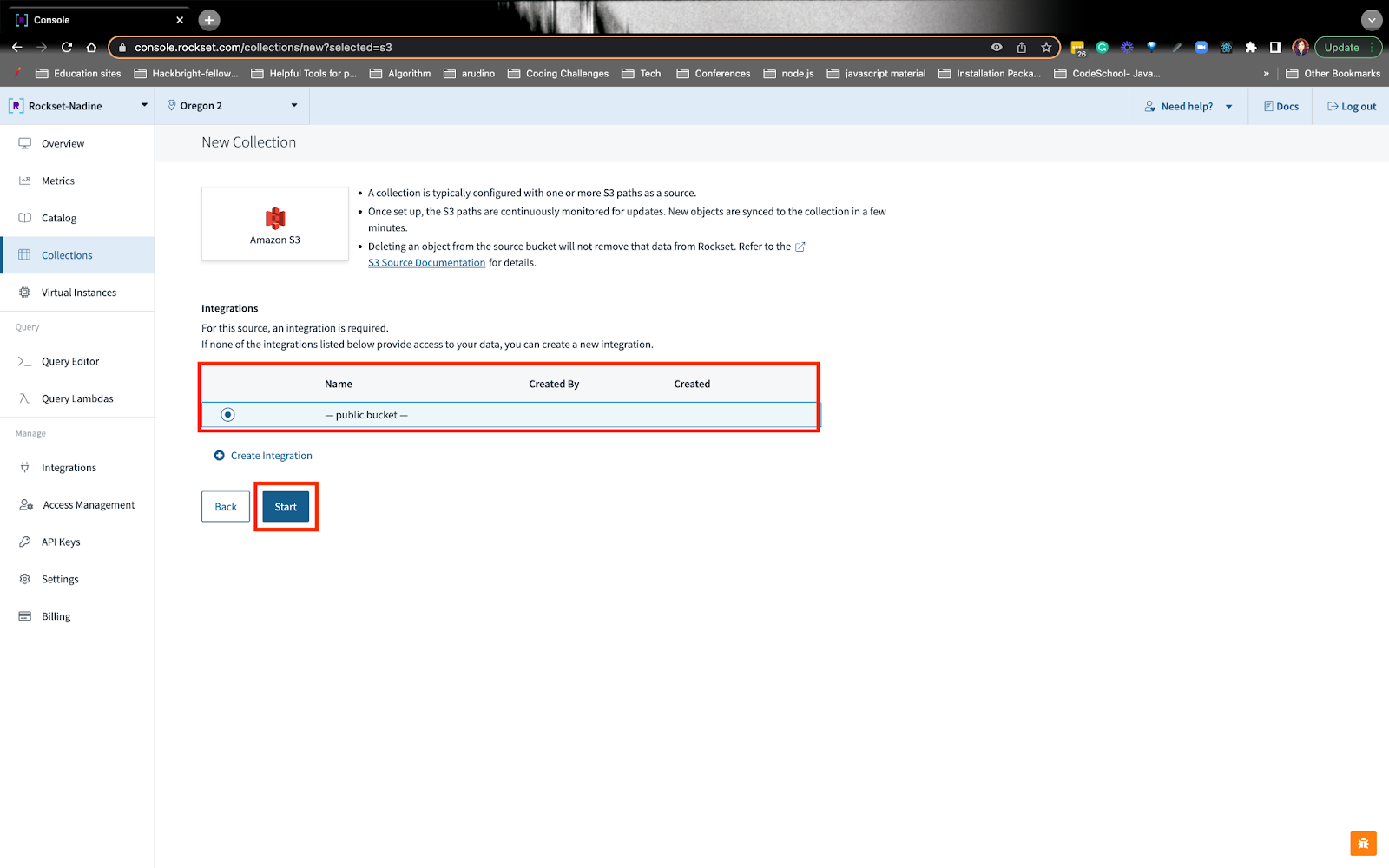

You can choose the S3 option and select the public S3 bucket:

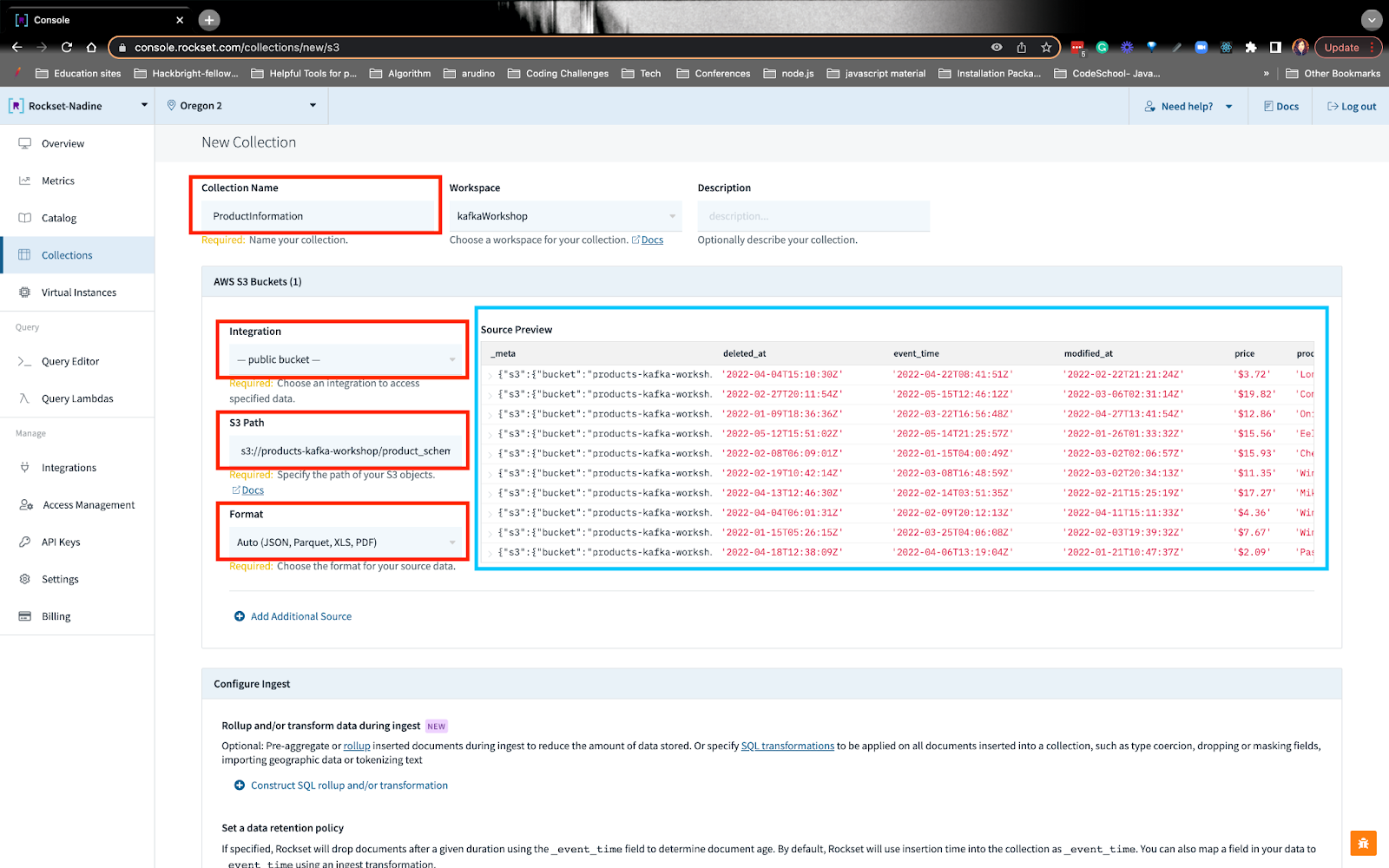

From here, you can fill in the details, including the S3 path URI, and see the source preview:

Similar to before, we can create SQL transformations on the S3 data:

You can follow how we wrote the SQL transformations.

Build a real-time recommendation query on Rockset

Once you’ve created all the collections, we’re ready to write our recommendation query! In the query, we want to build a recommendation of items based on the user’s activities since their last purchase. We’re building the recommendation by gathering other items users have purchased along with the item the user was interested in since their last purchase.

You can follow exactly how we build this query. I’ll summarize the steps below.

Step 1: Find the user’s last purchase date

We’ll need to order their purchase activities in descending order and grab the latest date. You’ll notice on line 8 we’re using a parameter :userid. When we make a request, we can write the userid we want in the request body.

Step 2: Grab the customer’s latest activities since their last purchase

Here, we’re writing a CTE (common table expression) where we can find the activities since their last purchase. You’ll notice on line 24 we’re only interested in the activity _event_time that’s greater than the purchase _event_time.
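The logic of steps 1 and 2 can be sketched in plain Python over in-memory lists. This is not the Rockset SQL from the workshop; the sample records and the helpers `last_purchase_time` and `activities_since` are made up for illustration.

```python
from datetime import datetime

# Made-up sample data standing in for the two Kafka-backed collections.
purchases = [
    {"userid": "u-001", "items": [5, 17], "_event_time": datetime(2022, 5, 1, 9, 0)},
    {"userid": "u-001", "items": [23], "_event_time": datetime(2022, 5, 3, 18, 30)},
    {"userid": "u-002", "items": [17, 42], "_event_time": datetime(2022, 5, 4, 8, 0)},
]
activities = [
    {"userid": "u-001", "action": "view", "product_id": 5,
     "_event_time": datetime(2022, 5, 2, 10, 0)},
    {"userid": "u-001", "action": "add", "product_id": 17,
     "_event_time": datetime(2022, 5, 4, 11, 0)},
    {"userid": "u-002", "action": "add", "product_id": 23,
     "_event_time": datetime(2022, 5, 5, 9, 0)},
]


def last_purchase_time(purchases, userid):
    """Step 1: sort the user's purchases by time, descending, and take the first."""
    mine = sorted((p for p in purchases if p["userid"] == userid),
                  key=lambda p: p["_event_time"], reverse=True)
    return mine[0]["_event_time"] if mine else None


def activities_since(activities, userid, since):
    """Step 2: keep the user's activities strictly newer than the last purchase."""
    return [a for a in activities
            if a["userid"] == userid and (since is None or a["_event_time"] > since)]


last = last_purchase_time(purchases, "u-001")
recent = activities_since(activities, "u-001", last)
print(last, [a["product_id"] for a in recent])
```

Here the `userid` argument plays the role of the `:userid` query parameter, and a user with no purchases simply gets all of their activities back.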

Step 3: Find previous purchases that contain the customer’s items

We’ll want to find all the purchases made by other people that contain the customer’s items. From here we can see what items our customer will likely buy. The key thing I want to point out is on line 44: we use ARRAY_CONTAINS() to find the item of interest and see what other purchases have this item.

Step 4: Aggregate all the purchases by unnesting an array

We’ll want to see the items that have been purchased along with the customer’s item of interest. In step 3, we got an array of all the purchases, but we can’t aggregate the product IDs just yet. We need to flatten the array and then aggregate the product IDs to see which product the customer will be interested in. On line 52 we UNNEST() the array and on line 49 we COUNT(*) how many times each product ID recurs. The top product IDs with the highest counts, excluding the product of interest, are the items we can recommend to the customer.
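As a rough Python analogue of steps 3 and 4, the membership test stands in for ARRAY_CONTAINS(), and feeding each item array into a counter stands in for UNNEST() followed by COUNT(*). The sample purchases and helper names are assumptions for illustration only.

```python
from collections import Counter

# Made-up purchases; each has a nested array of product IDs.
purchases = [
    {"userid": "u-002", "items": [17, 42, 8]},
    {"userid": "u-003", "items": [17, 42]},
    {"userid": "u-004", "items": [3, 8]},
    {"userid": "u-005", "items": [17, 8, 42]},
]
items_of_interest = [17]


def purchases_containing(purchases, product_id):
    """Step 3: keep purchases whose item array contains the product."""
    return [p for p in purchases if product_id in p["items"]]


def co_purchase_counts(purchases, items_of_interest):
    """Step 4: flatten the item arrays of matching purchases and count each ID."""
    counts = Counter()
    for product_id in items_of_interest:
        for purchase in purchases_containing(purchases, product_id):
            counts.update(purchase["items"])  # one increment per array element
    return counts


counts = co_purchase_counts(purchases, items_of_interest)
print(counts.most_common())
```

At this point the item of interest is still in the counts (it appears in every matching purchase), which is exactly why a filtering step is needed afterwards.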

Step 5: Filter results so they don’t contain the product of interest

On lines 63-69 we filter out the customer’s product of interest by using NOT IN().

Step 6: Identify the product ID with the product name

Product IDs can only go so far; we need to know the product names so the customer can search through the e-commerce website and potentially add the item to their cart. On line 77 we join the S3 public bucket that contains the product information with the Kafka data that contains the purchase information via the product IDs.
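Steps 5 and 6 amount to an exclusion filter followed by a lookup join. Here’s a small Python sketch under assumed data: the counts, the product catalog, and the `recommend` helper are all hypothetical, and the sort order (highest count first, then lowest product ID) is a choice made for the example.

```python
# Made-up inputs: co-purchase counts and a product catalog (the S3 side).
counts = {17: 3, 42: 3, 8: 2}
items_of_interest = {17}
products = [
    {"product_id": 8, "name": "Oat milk"},
    {"product_id": 17, "name": "Bananas"},
    {"product_id": 42, "name": "Granola"},
]


def recommend(counts, items_of_interest, products, limit=5):
    """Filter out the items of interest, rank by count, and attach product names."""
    names = {p["product_id"]: p["name"] for p in products}
    # Step 5: the equivalent of NOT IN (items of interest).
    candidates = [(pid, n) for pid, n in counts.items() if pid not in items_of_interest]
    # Highest count first; ties broken by product ID so the order is stable.
    candidates.sort(key=lambda pair: (-pair[1], pair[0]))
    # Step 6: inner join on product ID; IDs missing from the catalog are dropped.
    return [{"product_id": pid, "name": names[pid], "count": n}
            for pid, n in candidates[:limit] if pid in names]


recommendations = recommend(counts, items_of_interest, products)
print(recommendations)
```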

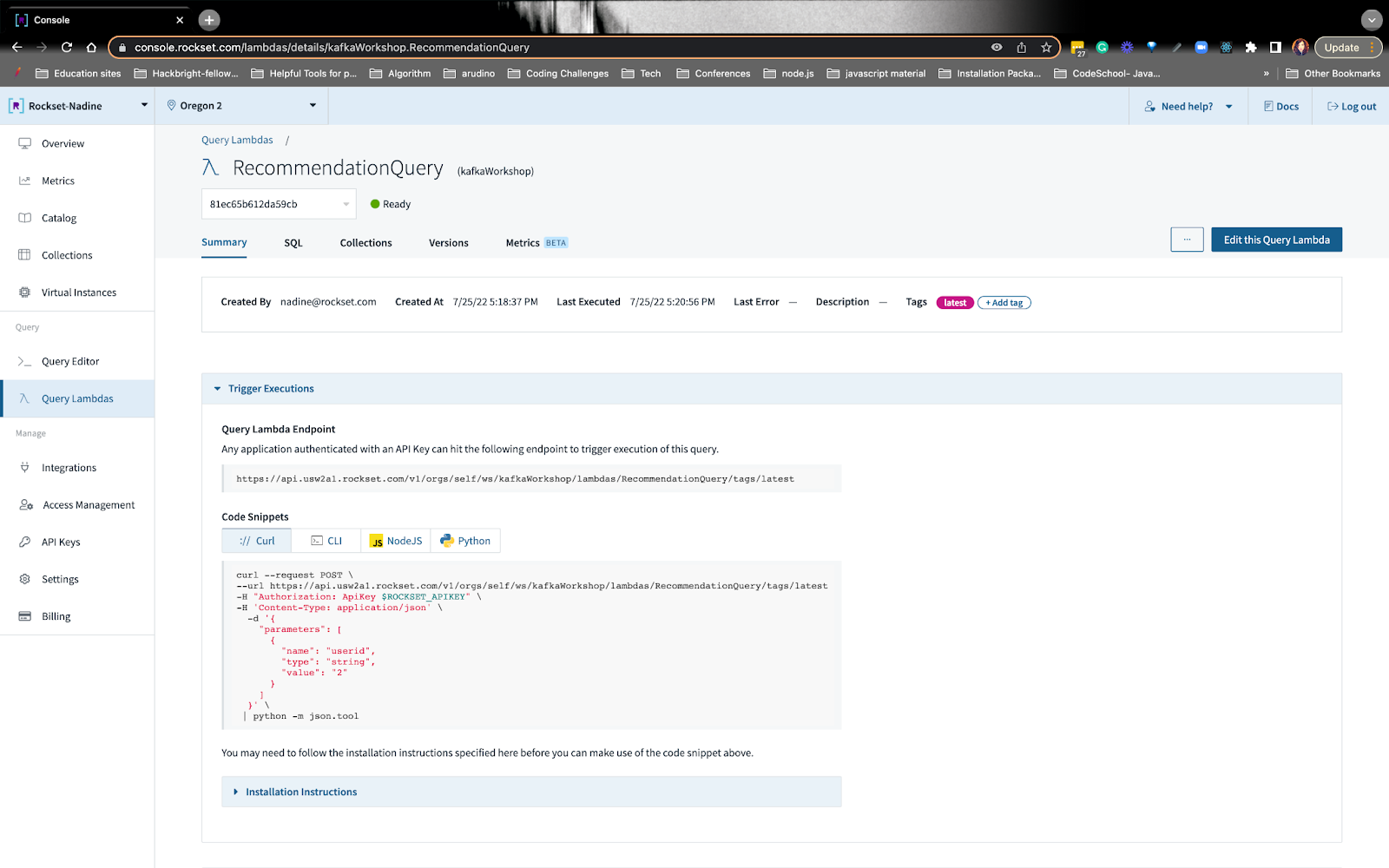

Step 7: Create a Query Lambda

On the Query Editor, you can turn the recommendation query into an API endpoint. Rockset automatically generates the API endpoint, and it’ll look like this:

We’re going to use this endpoint on Retool.

That wraps up the recommendation query! We wrote some other queries that you can find on the workshop page, like getting the user’s average purchase price and total spend!

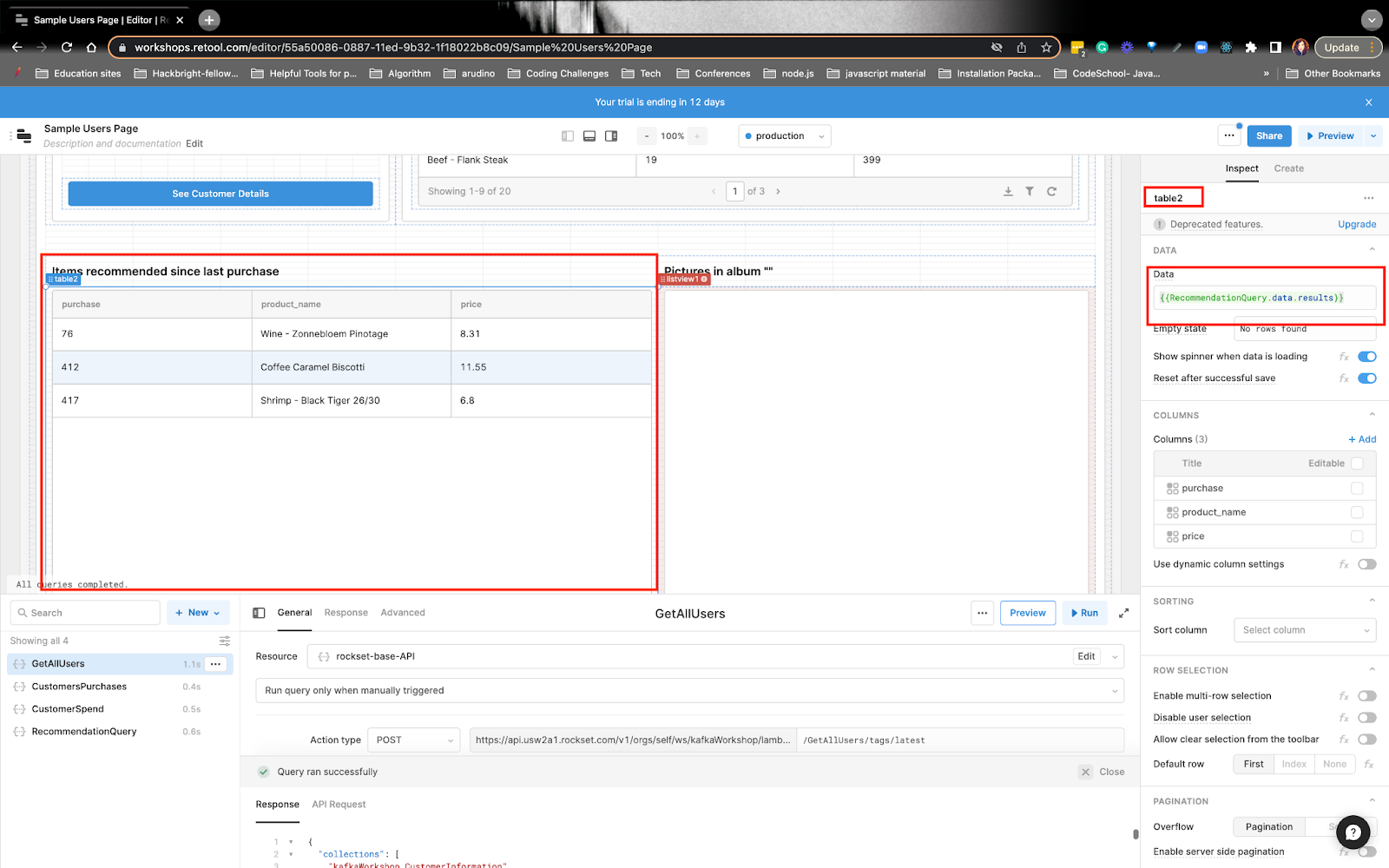

Finish building the app in Retool with data from Rockset

Retool is great for building internal tools. Here, customer service agents or other team members can easily access the data and assist customers. The data that’ll be displayed on Retool will be coming from the Rockset queries we wrote. Anytime Retool sends a request to Rockset, Rockset returns the results, and Retool displays the data.

You can get the full scoop on how we will build on Retool.

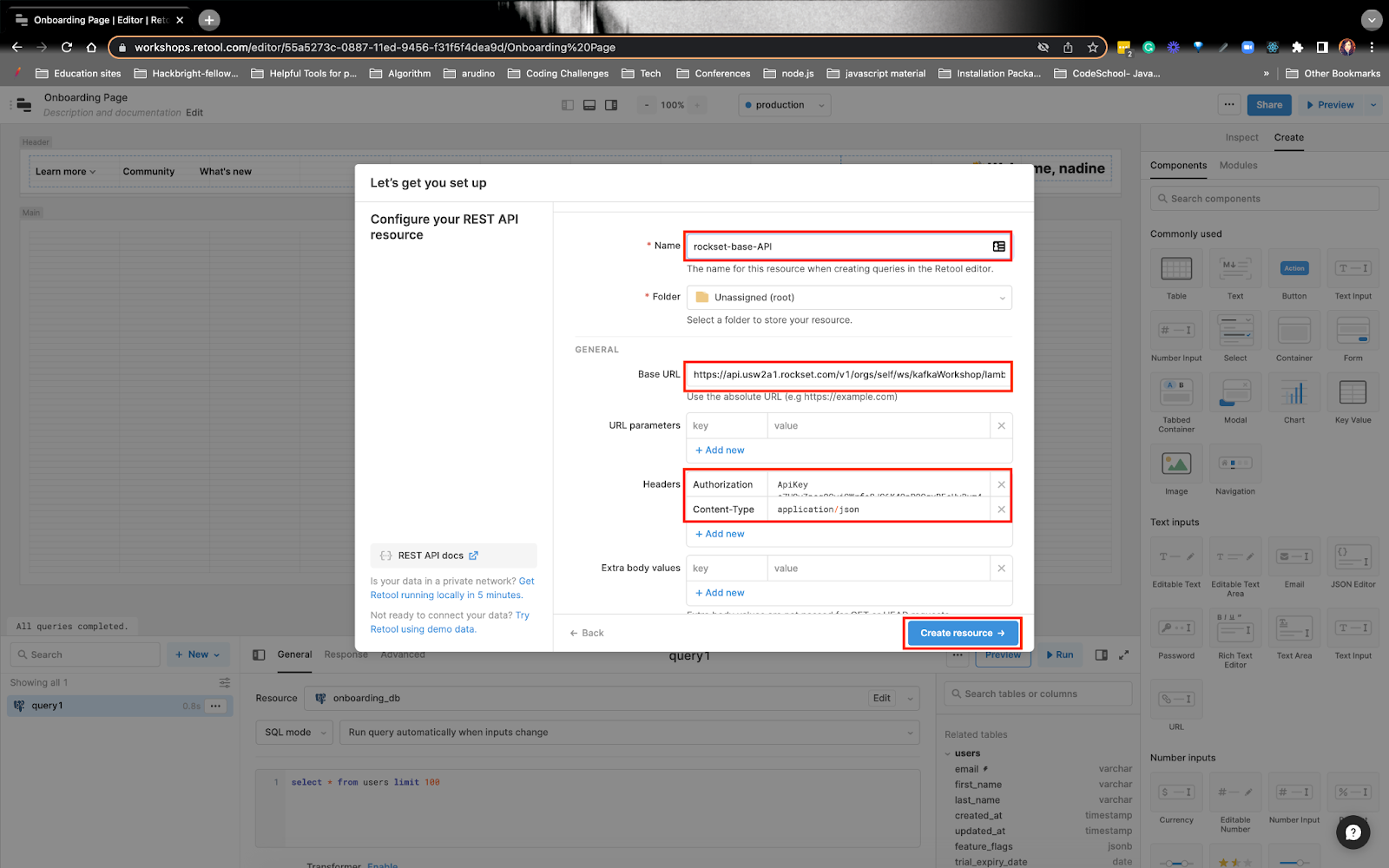

Once you create your account, you’ll want to set up the resource endpoint. You’ll want to choose the API option and set up the resource:

You’ll want to give the resource a name; here I named it rockset-base-API.

You’ll see under the Base URL, I put the Query Lambda endpoint up to the lambdas portion; I didn’t put the whole endpoint. Example:

Under Headers, I put the Authorization and Content-Type values.

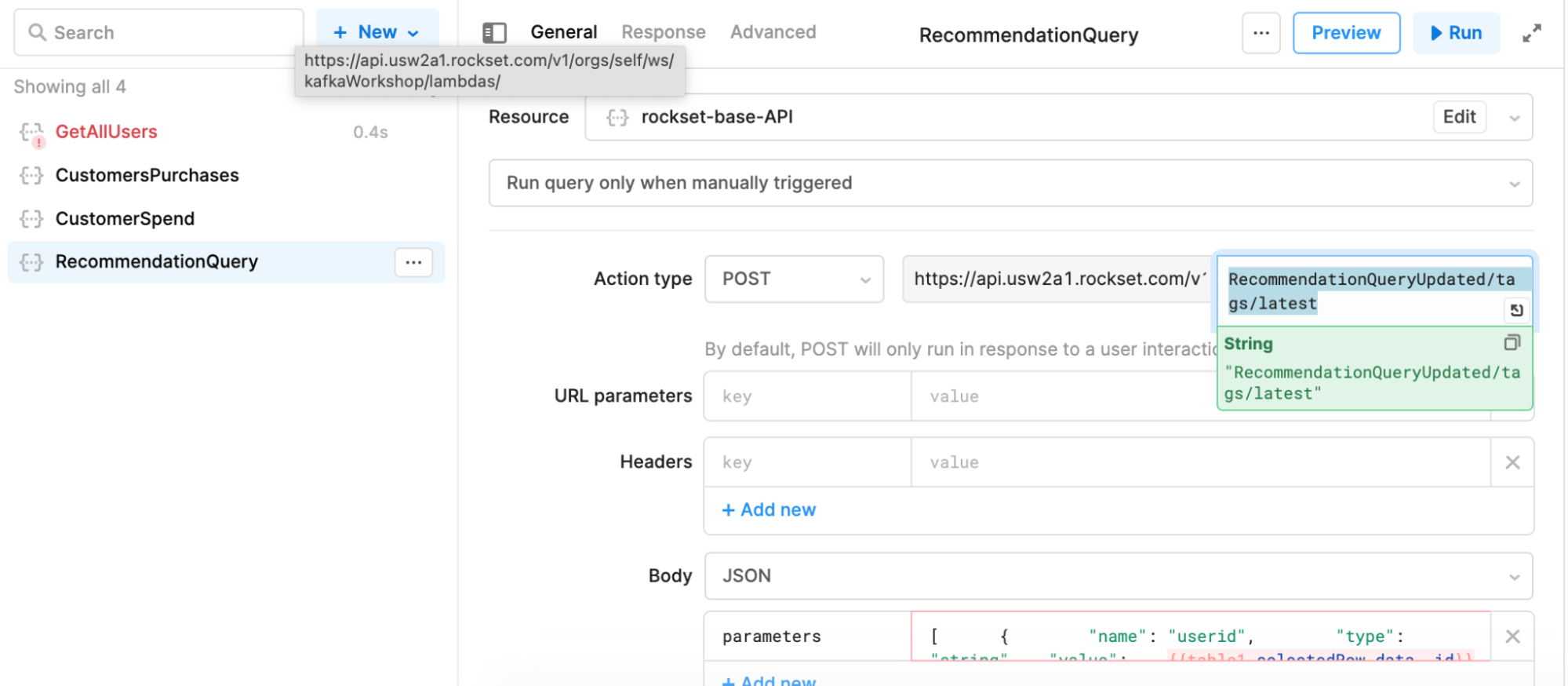

Now, you’ll need to create the resource query. You’ll want to choose the rockset-base-API as the resource, and in the second half of the resource URL you’ll put everything else that comes after the lambdas portion. Example:

- RecommendationQueryUpdated/tags/latest

Under the parameters section, you’ll want to dynamically update the userid.
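To see how the base URL, the path after the lambdas portion, the headers, and the userid parameter fit together, here’s a sketch that builds (but does not send) the equivalent HTTP request in Python. Everything specific is an assumption: the host and workspace in `BASE_URL` are placeholders, the `ApiKey` authorization scheme and the `parameters` body shape reflect my understanding of Rockset’s REST API rather than anything shown above, and the tag segment should be whatever your Query Lambda uses.

```python
import json
from urllib.request import Request

# Hypothetical values: substitute your own endpoint, workspace, and API key.
BASE_URL = "https://api.example.com/v1/orgs/self/ws/commons/lambdas"
LAMBDA_PATH = "RecommendationQueryUpdated/tags/latest"


def build_lambda_request(base_url: str, lambda_path: str,
                         userid: str, api_key: str) -> Request:
    """Combine the resource base URL with the query path and attach the userid."""
    url = f"{base_url.rstrip('/')}/{lambda_path.lstrip('/')}"
    # Assumed body shape: a list of named, typed query parameters.
    body = {"parameters": [{"name": "userid", "type": "string", "value": userid}]}
    headers = {
        "Authorization": f"ApiKey {api_key}",  # assumed auth scheme
        "Content-Type": "application/json",
    }
    return Request(url, data=json.dumps(body).encode("utf-8"),
                   headers=headers, method="POST")


request = build_lambda_request(BASE_URL, LAMBDA_PATH, "u-001", "YOUR_API_KEY")
print(request.get_method(), request.full_url)
```

In Retool, the userid value would come from a UI component (for example, a text input) instead of being hard-coded, so the query reruns whenever the selected customer changes.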

After you create the resource, you’ll want to add a table UI component and update it to reflect the user’s recommendation:

You can follow how we built the real-time customer app on Retool.

This wraps up how we built a real-time customer 360 app with Kafka, S3, Rockset, and Retool. If you have any questions or comments, definitely reach out to the Rockset Community.